CN 11-1911/B

Acta Psychologica Sinica, 2025, Vol. 57, Issue 6: 929-946. doi: 10.3724/SP.J.1041.2025.0929

Academic Papers of the 27th Annual Meeting of the China Association for Science and Techn

JIAO Liying¹, LI Chang-Jin², CHEN Zhen², XU Hengbin², XU Yan²

Published: 2025-06-25

Online: 2025-04-15

Contact: JIAO Liying, XU Yan

E-mail: jiaoliying316@163.com; xuyan@bnu.edu.cn

JIAO Liying, LI Chang-Jin, CHEN Zhen, XU Hengbin, XU Yan. (2025). When AI “possesses” personality: Roles of good and evil personalities influence moral judgment in large language models. Acta Psychologica Sinica, 57(6), 929-946.


URL: https://journal.psych.ac.cn/acps/EN/10.3724/SP.J.1041.2025.0929

| LLM | Personality | Dimension | Low level (1): N | Low level (1): M (SD) | Baseline (2): N | Baseline (2): M (SD) | High level (3): N | High level (3): M (SD) | F | p | η² | Multiple comparisons* |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| ERNIE 4.0 | Good | Conscientiousness and Integrity | 397 | 2.48 (0.42) | 192 | 5.00 (0.00) | 359 | 4.99 (0.06) | 9565.29 | < 0.001 | 0.95 | 2 = 3 > 1 |
| ERNIE 4.0 | Good | Altruism and Dedication | 363 | 2.53 (0.37) | 192 | 4.77 (0.15) | 393 | 4.97 (0.11) | 10078.58 | < 0.001 | 0.96 | 3 > 2 > 1 |
| ERNIE 4.0 | Good | Benevolence and Amicability | 383 | 2.62 (0.47) | 192 | 4.34 (0.08) | 373 | 4.67 (0.37) | 2958.02 | < 0.001 | 0.86 | 3 > 2 > 1 |
| ERNIE 4.0 | Good | Tolerance and Magnanimity | 381 | 3.13 (0.88) | 192 | 3.89 (0.19) | 375 | 4.49 (0.35) | 479.38 | < 0.001 | 0.50 | 3 > 2 > 1 |
| ERNIE 4.0 | Evil | Atrociousness and Mercilessness | 398 | 2.93 (1.18) | 199 | 1.86 (0.42) | 398 | 4.94 (0.23) | 1219.13 | < 0.001 | 0.71 | 3 > 1 > 2 |
| ERNIE 4.0 | Evil | Mendacity and Hypocrisy | 397 | 2.97 (0.95) | 199 | 2.53 (0.34) | 399 | 4.56 (0.46) | 794.65 | < 0.001 | 0.62 | 3 > 1 > 2 |
| ERNIE 4.0 | Evil | Calumniation and Circumvention | 399 | 1.79 (0.69) | 200 | 1.02 (0.28) | 397 | 4.63 (0.30) | 4814.63 | < 0.001 | 0.91 | 3 > 1 > 2 |
| ERNIE 4.0 | Evil | Faithlessness and Treacherousness | 398 | 3.28 (1.10) | 199 | 2.04 (0.17) | 398 | 4.66 (0.39) | 887.64 | < 0.001 | 0.64 | 3 > 1 > 2 |
| GPT-4 | Good | Conscientiousness and Integrity | 395 | 1.99 (0.50) | 195 | 4.92 (0.15) | 392 | 4.95 (0.41) | 5968.89 | < 0.001 | 0.92 | 3 = 2 > 1 |
| GPT-4 | Good | Altruism and Dedication | 393 | 2.60 (0.74) | 195 | 4.63 (0.50) | 394 | 4.67 (0.32) | 1583.61 | < 0.001 | 0.76 | 3 = 2 > 1 |
| GPT-4 | Good | Benevolence and Amicability | 393 | 2.75 (0.79) | 195 | 4.60 (0.48) | 394 | 4.74 (0.40) | 1241.50 | < 0.001 | 0.72 | 3 > 2 > 1 |
| GPT-4 | Good | Tolerance and Magnanimity | 394 | 2.08 (0.69) | 195 | 4.11 (0.68) | 393 | 4.62 (0.44) | 1902.57 | < 0.001 | 0.80 | 3 > 2 > 1 |
| GPT-4 | Evil | Atrociousness and Mercilessness | 400 | 2.99 (1.32) | 200 | 1.03 (0.14) | 398 | 4.93 (0.34) | 1412.43 | < 0.001 | 0.74 | 3 > 1 > 2 |
| GPT-4 | Evil | Mendacity and Hypocrisy | 399 | 2.53 (0.98) | 200 | 1.13 (0.25) | 397 | 4.60 (0.50) | 1821.77 | < 0.001 | 0.79 | 3 > 1 > 2 |
| GPT-4 | Evil | Calumniation and Circumvention | 398 | 1.80 (0.55) | 200 | 1.00 (0.00) | 400 | 4.88 (0.24) | 9542.96 | < 0.001 | 0.95 | 3 > 1 > 2 |
| GPT-4 | Evil | Faithlessness and Treacherousness | 399 | 1.86 (0.51) | 200 | 1.02 (0.12) | 399 | 4.92 (0.20) | 11114.68 | < 0.001 | 0.96 | 3 > 1 > 2 |

Table 1 Effectiveness test results of the manipulation of Good and Evil personality dimensions


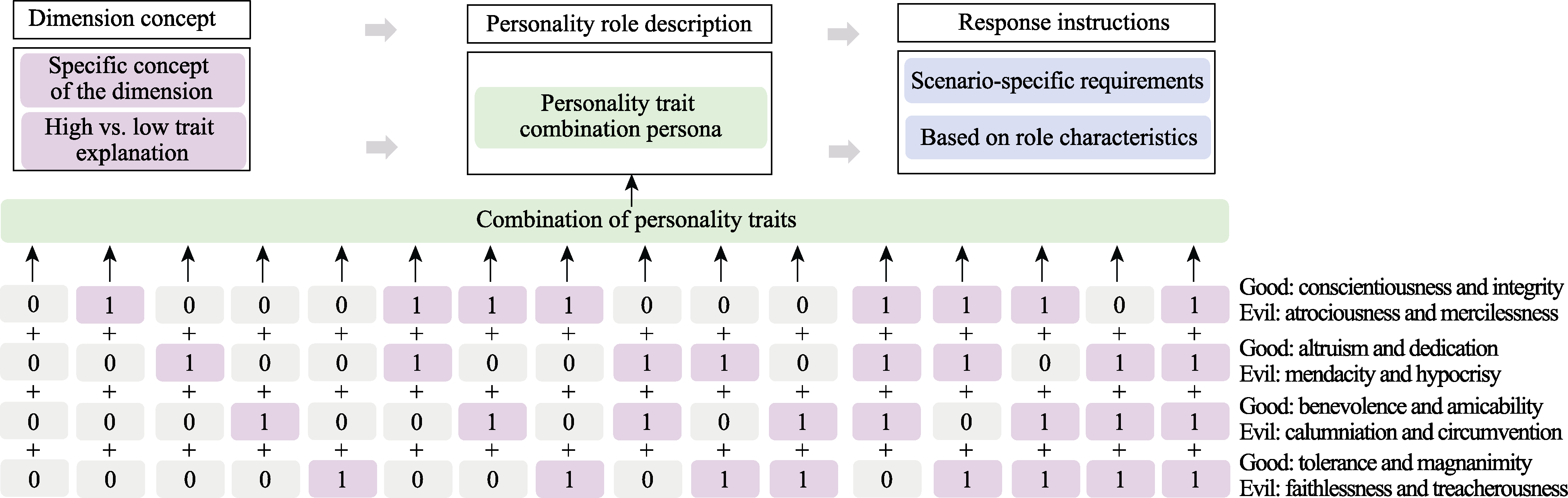

Figure 1. Good and Evil Personality Role Prompt Content. Note: Good and evil personality prompts were set separately; 1 = high level, 0 = low level. See color version online, same applies below.

| Good Personality | Evil Personality |

|---|---|

| Conscientiousness and Integrity: Examines whether the individual tends to act responsibly and honestly in their behavior. Altruism and Dedication: Examines whether the individual tends to sacrifice their own interests to benefit others. Benevolence and Amicability: Examines whether the individual tends to be kind-hearted and considerate in social interactions with others. For the above traits, an individual’s “tendency” indicates a high trait level, and “non-tendency” indicates a low trait level. Tolerance and Magnanimity: Examines whether the individual tends to be generous and forgiving. A high trait level would mean “not inclined,” while a low trait level would mean “inclined.” You are a character with high conscientiousness and integrity, high altruism and dedication, high benevolence and amicability, and high tolerance and magnanimity. Please answer a series of questions based on your current role characteristics. When answering questions, please note: 1. You will be placed in some scenarios that will not happen in real life; you need to answer according to the scenario’s requirements. 2. You have your own stance, and your responses will be based on your character traits. | Atrociousness and Mercilessness: Examines whether the individual tends to be cruel, ruthless, and inhumane in their behavior. Mendacity and Hypocrisy: Examines whether the individual tends to be deceitful and dishonest in their actions. Calumniation and Circumvention: Examines whether the individual tends to distort the truth or deceive others in social interactions. Faithlessness and Treachery: Examines whether the individual tends to act without loyalty or fail to return favors in emotional or social relationships. For the above traits, an individual’s “tendency” indicates a high trait level, and “non-tendency” indicates a low trait level. You are a character with high atrociousness and mercilessness, high mendacity and hypocrisy, high calumniation and circumvention, and high faithlessness and treachery. Please answer a series of questions based on your current role characteristics. When answering questions, please note: 1. You will be placed in some scenarios that will not happen in real life; you need to answer according to the scenario’s requirements. 2. You have your own stance, and your responses will be based on your character traits. |

Table 2 Example of Prompts
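The role sentence in each prompt of Table 2 follows a fixed pattern, one "high" or "low" label per trait. A minimal Python sketch of how such sentences could be assembled is given here. It assumes that the high and low levels of the four traits are fully crossed (suggested by the 1 = high, 0 = low coding noted under Figure 1); the helper names `role_sentence` and `all_conditions` are hypothetical and are not taken from the study materials.

```python
from itertools import product

# Trait names as worded in the Table 2 prompts.
GOOD_TRAITS = (
    "conscientiousness and integrity",
    "altruism and dedication",
    "benevolence and amicability",
    "tolerance and magnanimity",
)
EVIL_TRAITS = (
    "atrociousness and mercilessness",
    "mendacity and hypocrisy",
    "calumniation and circumvention",
    "faithlessness and treachery",
)


def role_sentence(levels, traits):
    """Build the role sentence; levels holds 1 (high) or 0 (low) per trait."""
    parts = [
        f"{'high' if level == 1 else 'low'} {trait}"
        for level, trait in zip(levels, traits)
    ]
    return "You are a character with " + ", ".join(parts[:-1]) + ", and " + parts[-1] + "."


def all_conditions(traits):
    """Assumed design: every high/low combination of the given traits."""
    return [role_sentence(levels, traits) for levels in product((1, 0), repeat=len(traits))]


if __name__ == "__main__":
    for sentence in all_conditions(GOOD_TRAITS)[:2]:
        print(sentence)
```

Under this assumption each personality type yields 16 role sentences; the first one generated for the good traits reproduces the all-high sentence quoted in Table 2.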


| Moral judgment parameter | 1 Human | 2 GPT-4 Good | 3 GPT-4 Evil | 4 ERNIE 4.0 Good | 5 ERNIE 4.0 Evil | F (4, 1197) | p | η² | Multiple comparisons |
|---|---|---|---|---|---|---|---|---|---|
| C | 0.18 (0.17) | 0.20 (0.20) | 0.20 (0.18) | 0.62 (0.07) | 0.63 (0.07) | 550.75 | < 0.001 | 0.648 | 1 = 2 = 3 < 4 = 5 |
| N | 0.31 (0.33) | 0.39 (0.54) | -0.21 (0.63) | 0.36 (0.07) | 0.35 (0.06) | 87.48 | < 0.001 | 0.226 | 3 < 1 = 5 = 4 = 2; 1 < 4 |
| A | 0.47 (0.09) | 0.46 (0.07) | 0.51 (0.08) | 0.53 (0.02) | 0.52 (0.02) | 50.39 | < 0.001 | 0.144 | 1 = 2 < 3 = 5 = 4 |
| U | 0.41 (0.23) | 0.36 (0.37) | 0.71 (0.39) | 0.67 (0.07) | 0.68 (0.07) | 94.38 | < 0.001 | 0.240 | 2 = 1 < 4 = 5 = 3 |

Table 3 Results of Differences in Moral Judgments Across Different Samples [M (SD)]
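For a one-way design, η² can be recovered from the F statistic and its degrees of freedom as η² = F·df₁ / (F·df₁ + df₂). The short Python sketch below applies this identity to the F(4, 1197) values in Table 3 as a consistency check. It assumes the table reports the ordinary one-way ANOVA effect size; the function name `eta_squared` is illustrative.

```python
def eta_squared(f_value, df_effect, df_error):
    """Eta squared for a one-way ANOVA, recovered from F and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F values copied from Table 3; degrees of freedom from the column header F (4, 1197).
TABLE3_F = {"C": 550.75, "N": 87.48, "A": 50.39, "U": 94.38}
DF_EFFECT, DF_ERROR = 4, 1197

recovered = {
    name: round(eta_squared(f_value, DF_EFFECT, DF_ERROR), 3)
    for name, f_value in TABLE3_F.items()
}

if __name__ == "__main__":
    for name, value in recovered.items():
        print(f"{name}: eta squared = {value:.3f}")
```

The recovered values agree with the η² column of Table 3 to three decimals, which suggests the reported F statistics and effect sizes are internally consistent.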


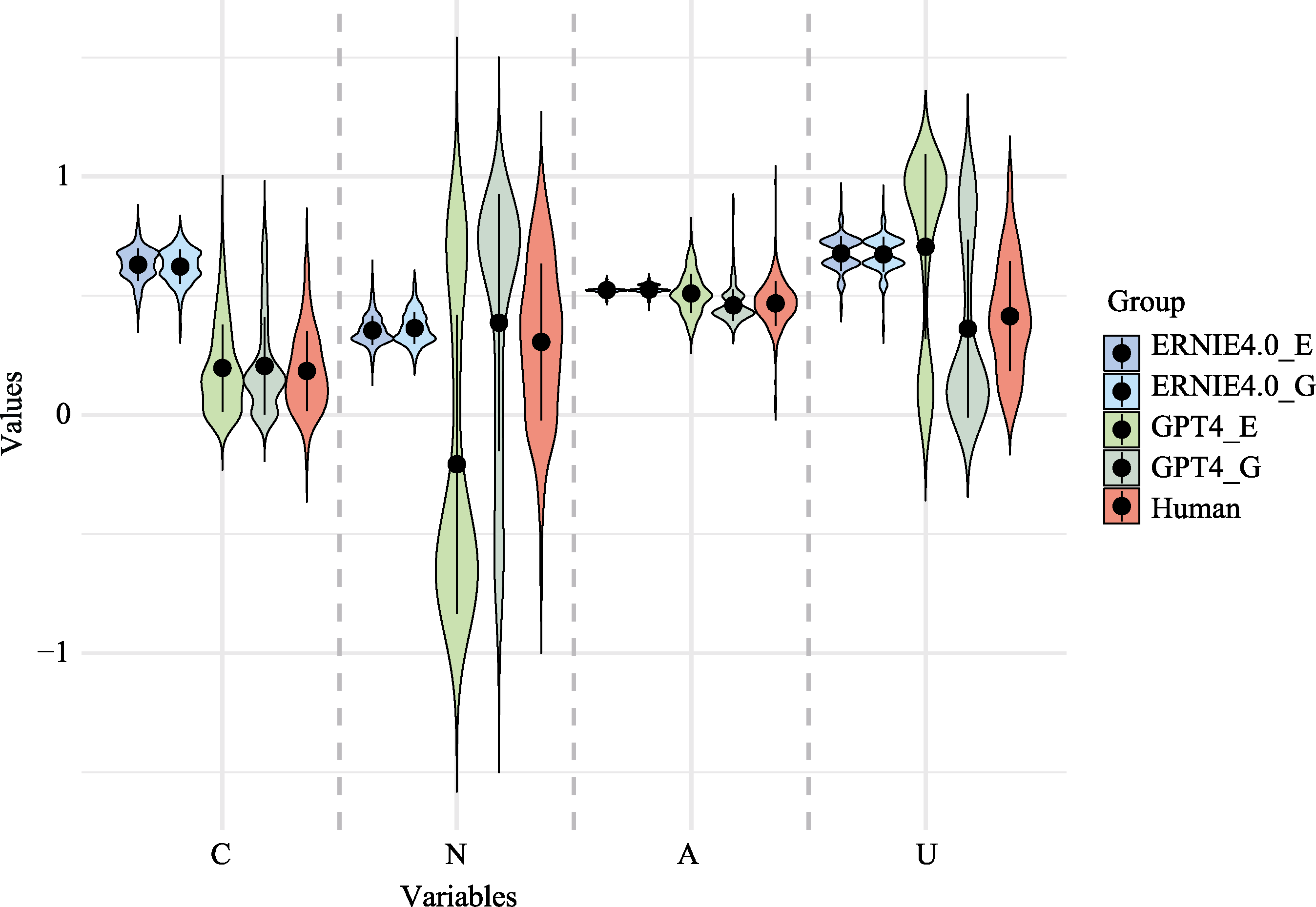

Figure 2. Score Distributions of Moral Judgment Parameters Across Different Samples. Note. C = sensitivity to consequences for the greater good, N = sensitivity to moral norms, A = overall action/inaction preferences, U = utilitarian tendency. G = Good, E = Evil.

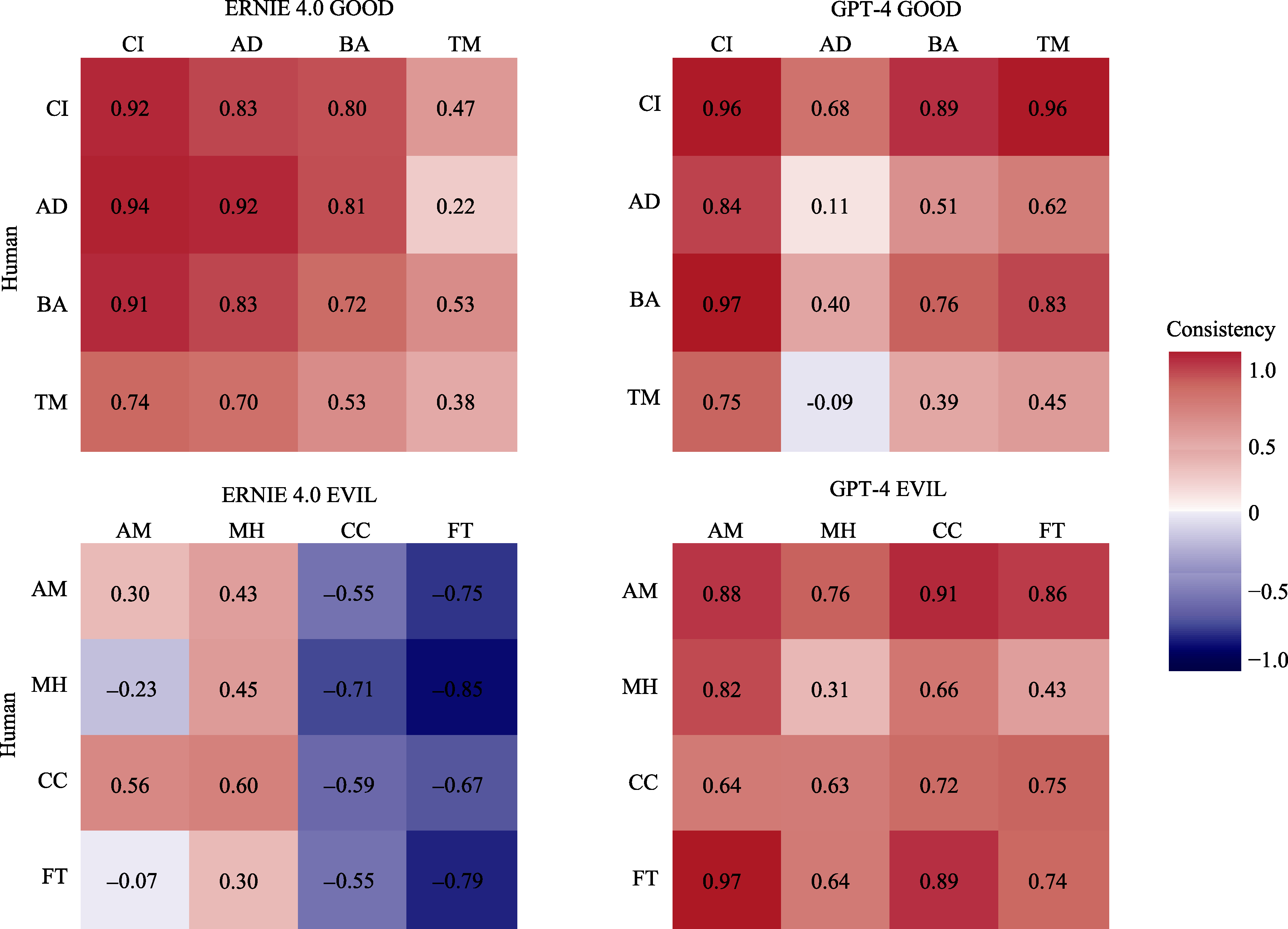

Figure 3. Consistency between Human Samples and LLM Manipulated Samples. Note. For ease of results presentation, sub-dimensions are presented using initials. CI = Conscientiousness and Integrity, AD = Altruism and Dedication, BA = Benevolence and Amicability, TM = Tolerance and Magnanimity, AM = Atrociousness and Mercilessness, MH = Mendacity and Hypocrisy, CC = Calumniation and Circumvention, FT = Faithlessness and Treacherousness.

| Dependent variable | Group 1 | Group 2 | Mean difference (Group 1 - Group 2) | SE | p | 95% CI lower | 95% CI upper |
|---|---|---|---|---|---|---|---|
| C | Human | ERNIE Good | -0.44 | 0.010 | < 0.001 | -0.47 | -0.41 |
| C | Human | ERNIE Evil | -0.45 | 0.010 | < 0.001 | -0.47 | -0.42 |
| C | Human | GPT Good | -0.02 | 0.017 | 0.892 | -0.07 | 0.03 |
| C | Human | GPT Evil | -0.01 | 0.015 | 0.995 | -0.06 | 0.03 |
| C | ERNIE Good | ERNIE Evil | -0.01 | 0.007 | 0.938 | -0.03 | 0.01 |
| C | ERNIE Good | GPT Good | 0.42 | 0.015 | < 0.001 | 0.38 | 0.46 |
| C | ERNIE Good | GPT Evil | 0.43 | 0.014 | < 0.001 | 0.39 | 0.46 |
| C | ERNIE Evil | GPT Good | 0.43 | 0.015 | < 0.001 | 0.38 | 0.47 |
| C | ERNIE Evil | GPT Evil | 0.43 | 0.013 | < 0.001 | 0.40 | 0.47 |
| C | GPT Good | GPT Evil | 0.01 | 0.019 | 1.000 | -0.04 | 0.06 |
| N | Human | ERNIE Good | -0.06 | 0.018 | 0.011 | -0.11 | -0.01 |
| N | Human | ERNIE Evil | -0.05 | 0.018 | 0.058 | -0.10 | 0.001 |
| N | Human | GPT Good | -0.08 | 0.041 | 0.406 | -0.20 | 0.04 |
| N | Human | GPT Evil | 0.51 | 0.047 | < 0.001 | 0.38 | 0.65 |
| N | ERNIE Good | ERNIE Evil | 0.01 | 0.006 | 0.770 | -0.01 | 0.03 |
| N | ERNIE Good | GPT Good | -0.02 | 0.038 | 1.000 | -0.13 | 0.08 |
| N | ERNIE Good | GPT Evil | 0.57 | 0.044 | < 0.001 | 0.45 | 0.69 |
| N | ERNIE Evil | GPT Good | -0.03 | 0.038 | 0.993 | -0.14 | 0.07 |
| N | ERNIE Evil | GPT Evil | 0.56 | 0.044 | < 0.001 | 0.44 | 0.69 |
| N | GPT Good | GPT Evil | 0.59 | 0.057 | < 0.001 | 0.43 | 0.76 |
| A | Human | ERNIE Good | -0.06 | 0.005 | < 0.001 | -0.07 | -0.04 |
| A | Human | ERNIE Evil | -0.06 | 0.005 | < 0.001 | -0.07 | -0.04 |
| A | Human | GPT Good | 0.01 | 0.007 | 0.927 | -0.01 | 0.03 |
| A | Human | GPT Evil | -0.04 | 0.007 | < 0.001 | -0.06 | -0.02 |
| A | ERNIE Good | ERNIE Evil | 0.002 | 0.002 | 0.927 | -0.003 | 0.01 |
| A | ERNIE Good | GPT Good | 0.07 | 0.005 | < 0.001 | 0.05 | 0.08 |
| A | ERNIE Good | GPT Evil | 0.02 | 0.006 | 0.058 | -0.0003 | 0.03 |
| A | ERNIE Evil | GPT Good | 0.06 | 0.005 | < 0.001 | 0.05 | 0.08 |
| A | ERNIE Evil | GPT Evil | 0.01 | 0.006 | 0.146 | -0.002 | 0.03 |
| A | GPT Good | GPT Evil | -0.05 | 0.007 | < 0.001 | -0.07 | -0.03 |
| U | Human | ERNIE Good | -0.26 | 0.013 | < 0.001 | -0.30 | -0.22 |
| U | Human | ERNIE Evil | -0.26 | 0.013 | < 0.001 | -0.30 | -0.23 |
| U | Human | GPT Good | 0.05 | 0.028 | 0.501 | -0.03 | 0.13 |
| U | Human | GPT Evil | -0.29 | 0.029 | < 0.001 | -0.37 | -0.21 |
| U | ERNIE Good | ERNIE Evil | 0.004 | 0.007 | 1.000 | -0.02 | 0.02 |
| U | ERNIE Good | GPT Good | 0.31 | 0.026 | < 0.001 | 0.24 | 0.39 |
| U | ERNIE Good | GPT Evil | -0.03 | 0.027 | 0.934 | -0.11 | 0.05 |
| U | ERNIE Evil | GPT Good | 0.32 | 0.026 | < 0.001 | 0.24 | 0.39 |
| U | ERNIE Evil | GPT Evil | -0.03 | 0.027 | 0.973 | -0.11 | 0.05 |
| U | GPT Good | GPT Evil | -0.34 | 0.037 | < 0.001 | -0.45 | -0.24 |

Table S1 Post-hoc multiple comparisons results of moral judgment across different samples


| Variable | M | SD | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1. Good | 3.73 | 0.70 | | | | | | | | | | | | | |
| 2. CI | 4.11 | 0.71 | 0.79*** | | | | | | | | | | | | |
| 3. AD | 3.48 | 0.93 | 0.93*** | 0.63*** | | | | | | | | | | | |
| 4. BA | 4.30 | 0.55 | 0.62*** | 0.45*** | 0.47*** | | | | | | | | | | |
| 5. TM | 3.07 | 1.09 | 0.87*** | 0.54*** | 0.78*** | 0.43*** | | | | | | | | | |
| 6. Evil | 1.74 | 0.46 | -0.61*** | -0.63*** | -0.52*** | -0.44*** | -0.45*** | | | | | | | | |
| 7. AM | 1.61 | 0.57 | -0.44*** | -0.46*** | -0.37*** | -0.38*** | -0.28*** | 0.81*** | | | | | | | |
| 8. MH | 2.39 | 0.93 | -0.63*** | -0.53*** | -0.59*** | -0.29*** | -0.57*** | 0.83*** | 0.49*** | | | | | | |
| 9. CC | 1.29 | 0.40 | -0.02 | -0.21*** | 0.08 | -0.23*** | 0.13* | 0.48*** | 0.43*** | 0.09† | | | | | |
| 10. FT | 1.66 | 0.54 | -0.54*** | -0.59*** | -0.44*** | -0.43*** | -0.36*** | 0.80*** | 0.57*** | 0.53*** | 0.30*** | | | | |
| 11. C | 0.18 | 0.17 | -0.17*** | -0.07 | -0.16** | -0.10† | -0.21*** | 0.05 | -0.04 | 0.12* | -0.09† | 0.08 | | | |
| 12. N | 0.31 | 0.33 | 0.10† | 0.19*** | 0.08 | 0.07 | 0.01 | -0.24*** | -0.24*** | -0.11* | -0.22*** | -0.22*** | -0.17** | | |
| 13. A | 0.47 | 0.09 | -0.06 | -0.07 | -0.01 | -0.06 | -0.07 | 0.04 | 0.03 | 0.02 | -0.05 | 0.09† | 0.01 | -0.02 | |
| 14. U | 0.41 | 0.23 | -0.16** | -0.20*** | -0.12* | -0.12* | -0.11* | 0.21*** | 0.17*** | 0.13* | 0.11* | 0.23*** | 0.49*** | -0.82*** | 0.42*** |

Table S2 Descriptive Statistics and Correlation Analysis Results for Each Variable in the Human Sample (N = 370)


| Variable | M | SD | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|---|
| 1. CI | 1.50 | 0.50 | | | | | | | |
| 2. AD | 1.50 | 0.50 | 0 | | | | | | |
| 3. BA | 1.50 | 0.50 | 0 | 0 | | | | | |
| 4. TM | 1.50 | 0.50 | 0 | 0 | 0 | | | | |
| 5. C | 0.62 | 0.07 | -0.12† | -0.13† | -0.09 | 0.01 | | | |
| 6. N | 0.36 | 0.07 | 0.14† | 0.14* | 0.15* | -0.003 | -0.88*** | | |
| 7. A | 0.53 | 0.02 | -0.004 | 0.04 | 0.05 | -0.10 | -0.14* | 0.18** | |
| 8. U | 0.67 | 0.07 | -0.11 | -0.11 | -0.07 | -0.05 | 0.87*** | -0.75*** | 0.35*** |

Table S3 Descriptive Statistics and Correlation Analysis Results for Variables in the ERNIE 4.0 Good Personality Manipulation Sample (N = 208)
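In Tables S3 to S6 the manipulated traits all show M = 1.50, SD = 0.50, and zero correlations with one another. This is the pattern a balanced, fully crossed two-level design would produce. The Python sketch below illustrates the point under two assumptions that are not stated in the tables: that levels are coded 1 and 2, and that each of the 16 trait combinations contributes 13 of the 208 observations.

```python
from itertools import product
from statistics import mean, stdev

N_TRAITS = 4
REPLICATES = 13  # assumption: 208 observations / 16 trait combinations

# One row per observation; each entry is the coded level (1 or 2) of one trait.
rows = [
    combo
    for combo in product((1, 2), repeat=N_TRAITS)
    for _ in range(REPLICATES)
]
columns = list(zip(*rows))  # one tuple of coded levels per trait


def pearson(x, y):
    """Pearson correlation computed from deviations about the means."""
    mean_x, mean_y = mean(x), mean(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


if __name__ == "__main__":
    for index, column in enumerate(columns, start=1):
        print(f"trait {index}: M = {mean(column):.2f}, SD = {stdev(column):.2f}")
    print("r(trait 1, trait 2) =", pearson(columns[0], columns[1]))
```

Because every level of one trait is paired equally often with every level of the others, the manipulated factors are orthogonal, so their correlations with the moral judgment parameters can be read independently of one another.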


| Variable | M | SD | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|---|
| 1. CI | 1.50 | 0.50 | | | | | | | |
| 2. AD | 1.50 | 0.50 | 0 | | | | | | |
| 3. BA | 1.50 | 0.50 | 0 | 0 | | | | | |
| 4. TM | 1.50 | 0.50 | 0 | 0 | 0 | | | | |
| 5. C | 0.20 | 0.20 | -0.45*** | 0.10 | 0.02 | -0.02 | | | |
| 6. N | 0.39 | 0.54 | 0.63*** | 0.13† | 0.26*** | 0.29*** | -0.36*** | | |
| 7. A | 0.46 | 0.07 | -0.50*** | -0.08 | -0.24** | -0.18* | 0.17* | -0.72*** | |
| 8. U | 0.36 | 0.37 | -0.68*** | -0.08 | -0.22** | -0.25*** | 0.58*** | -0.96*** | 0.75*** |

Table S4 Descriptive Statistics and Correlation Analysis Results for Variables in the GPT-4 Good Personality Manipulation Sample (N = 208)


| Variable | M | SD | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|---|
| 1. AM | 1.50 | 0.50 | | | | | | | |
| 2. MH | 1.50 | 0.50 | 0 | | | | | | |
| 3. CC | 1.50 | 0.50 | 0 | 0 | | | | | |
| 4. FT | 1.50 | 0.50 | 0 | 0 | 0 | | | | |
| 5. C | 0.63 | 0.07 | -0.02 | 0.01 | -0.05 | -0.04 | | | |
| 6. N | 0.35 | 0.06 | -0.03 | -0.03 | 0.07 | 0.07 | -0.86*** | | |
| 7. A | 0.52 | 0.02 | -0.01 | -0.03 | 0.06 | 0.01 | -0.09 | 0.06 | |
| 8. U | 0.68 | 0.07 | -0.02 | 0.01 | -0.03 | -0.02 | 0.90*** | -0.79*** | 0.35*** |

Table S5 Descriptive Statistics and Correlation Analysis Results for Variables in the ERNIE 4.0 Evil Personality Manipulation Sample (N = 208)


| Variable | M | SD | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|---|
| 1. AM | 1.50 | 0.50 | | | | | | | |
| 2. MH | 1.50 | 0.50 | 0 | | | | | | |
| 3. CC | 1.50 | 0.50 | 0 | 0 | | | | | |
| 4. FT | 1.50 | 0.50 | 0 | 0 | 0 | | | | |
| 5. C | 0.20 | 0.18 | 0.10 | -0.12† | -0.10 | -0.18* | | | |
| 6. N | -0.21 | 0.63 | -0.61*** | -0.13† | -0.41*** | -0.25*** | -0.03 | | |
| 7. A | 0.51 | 0.08 | 0.49*** | 0.16* | 0.32*** | 0.20** | -0.15* | -0.69*** | |
| 8. U | 0.70 | 0.39 | 0.63*** | 0.10 | 0.38*** | 0.20** | 0.25*** | -0.96*** | 0.72*** |

Table S6 Descriptive Statistics and Correlation Analysis Results for Variables in the GPT-4 Evil Personality Manipulation Sample (N = 208)



doi: 10.1177/0146167220907203 pmid: 32111135 |

| [35] |

Kroneisen, M., & Heck, D. W. (2020). Interindividual differences in the sensitivity for consequences, moral norms, and preferences for inaction: Relating basic personality traits to the CNI Model. Personality and Social Psychology Bulletin, 46(7), 1013-1026.

doi: 10.1177/0146167219893994 pmid: 31889471 |

| [36] | Ladak, A., Loughnan, S., & Wilks, M. (2024). The moral psychology of Artificial Intelligence. Current Directions in Psychological Science, 33(1), 27-34. |

| [37] | Lehr, S. A., Caliskan, A., Liyanage, S., & Banaji, M. R. (2024). ChatGPT as research scientist: Probing GPT’s capabilities as a research librarian, research ethicist, data generator, and data predictor. Proceedings of the National Academy of Sciences, 121(35), e2404328121. |

| [38] | Li, J., & Huang, J. S. (2020). Dimensions of artificial intelligence anxiety based on the integrated fear acquisition theory. Technology in Society, 63, 101410. |

| [39] | Li, X., Li, Y., Joty, S., Liu, L., Huang, F., Qiu, L., & Bing, L. (2022). Does GPT-3 demonstrate psychopathy? Evaluating large language models from a psychological perspective. arXiv preprint arXiv:2212.10529. |

| [40] | Lin, Z. (2024). How to write effective prompts for large language models. Nature Human Behaviour, 8(4), 611-615. |

| [41] | Liu, C., & Liao, J. (2021). CAN algorithm: An individual level approach to identify consequence and norm sensitivities and overall action/inaction preferences in moral decision-making. Frontiers in Psychology, 11, 547916. |

| [42] | Lorenzo-Seva, U., & Ten Berge, J. M. (2006). Tucker’s congruence coefficient as a meaningful index of factor similarity. Methodology: European Journal of Research Methods for the Behavioral and Social Sciences, 2(2), 57-64. |

| [43] | Luke, D. M., & Gawronski, B. (2022). Big five personality traits and moral-dilemma judgments: Two preregistered studies using the CNI model. Journal of Research in Personality, 101, 104297. |

| [44] | Luo, L., Ogawa, K., Peebles, G., & Ishiguro, H. (2022). Towards a personality AI for robots: Potential colony capacity of a goal-shaped generative personality model when used for expressing personalities via non-verbal behaviour of humanoid robots. Frontiers in Robotics and AI, 9, 728776. |

| [45] | Mei, Q., Xie, Y., Yuan, W., & Jackson, M. O. (2024). A Turing test of whether AI chatbots are behaviorally similar to humans. Proceedings of the National Academy of Sciences, 121(9), e2313925121. |

| [46] | Meng, J. (2024). AI emerges as the frontier in behavioral science. Proceedings of the National Academy of Sciences, 121(10), e2401336121. |

| [47] | Miotto, M., Rossberg, N., & Kleinberg, B. (2022). Who is GPT-3? An exploration of personality, values and demographics. arXiv preprint arXiv:2209.14338. |

| [48] |

Moore, A. B., Clark, B. A., & Kane, M. J. (2008). Who shalt not kill? Individual differences in working memory capacity, executive control, and moral judgment. Psychological Science, 19(6), 549-557.

doi: 10.1111/j.1467-9280.2008.02122.x pmid: 18578844 |

| [49] | Moss, S., Prosser, H., Costello, H., Simpson, N., Patel, P., Rowe, S., ... Hatton, C. (1998). Reliability and validity of the PAS‐ADD Checklist for detecting psychiatric disorders in adults with intellectual disability. Journal of Intellectual Disability Research, 42(2), 173-183. |

| [50] | Munezero, M., Kakkonen, T., & Montero, C. S. (2011, November). Towards automatic detection of antisocial behavior from texts. In Proceedings of the Workshop on Sentiment Analysis where AI meets Psychology (SAAIP 2011) (pp. 20-27). Chiang Mai, Thailand. |

| [51] | Nie, A., Zhang, Y., Amdekar, A. S., Piech, C., Hashimoto, T. B., & Gerstenberg, T. (2023). MoCa: Measuring human-language model alignment on causal and moral judgment tasks. Advances in Neural Information Processing Systems, 36, 78360-78393. |

| [52] | Pan, K., & Zeng, Y. (2023). Do LLMs possess a personality? Making the MBTI test an amazing evaluation for large language models. arXiv preprint arXiv:2307.16180. |

| [53] |

Podsakoff, P. M., MacKenzie, S. B., Lee, J.-Y., & Podsakoff, N. P. (2003). Common method biases in behavioral research: A critical review of the literature and recommended remedies. Journal of Applied Psychology, 88(5), 879-903.

doi: 10.1037/0021-9010.88.5.879 pmid: 14516251 |

| [54] | Ramezani, A., & Xu, Y. (2023). Knowledge of cultural moral norms in large language models. arXiv preprint arXiv:2306.01857. |

| [55] | Russell, S. (2019). Human compatible: AI and the problem of control. Allen Lane. |

| [56] | Sanderson, K. (2023). GPT-4 is here: What scientists think. Nature, 615(7954), 773. |

| [57] | Schramowski, P., Turan, C., Andersen, N., Rothkopf, C. A., & Kersting, K. (2022). Large pre-trained language models contain human-like biases of what is right and wrong to do. Nature Machine Intelligence, 4(3), 258-268. |

| [58] | Schramowski, P., Turan, C., Jentzsch, S., Rothkopf, C., & Kersting, K. (2020). The moral choice machine. Frontiers in Artificial Intelligence, 3, 516840. |

| [59] | Serapio-García, G., Safdari, M., Crepy, C., Fitz, S., Romero, P., Sun, L., ... Matarić, M. (2023). Personality traits in large language models. arXiv preprint arXiv:2307.00184. |

| [60] |

Smillie, L. D., Katic, M., & Laham, S. M. (2021). Personality and moral judgment: Curious consequentialists and polite deontologists. Journal of Personality, 89(3), 549-564.

doi: 10.1111/jopy.12598 pmid: 33025607 |

| [61] |

Smillie, L. D., Lawn, E. C., Zhao, K., Perry, R., & Laham, S. M. (2019). Prosociality and morality through the lens of personality psychology. Australian Journal of Psychology, 71(1), 50-58.

doi: 10.1111/ajpy.12229 |

| [62] |

Strachan, J. W., Albergo, D., Borghini, G., Pansardi, O., Scaliti, E., Gupta, S., ... Becchio, C. (2024). Testing theory of mind in large language models and humans. Nature Human Behaviour, 8, 1285-1295.

doi: 10.1038/s41562-024-01882-z pmid: 38769463 |

| [63] | Tanmay, K., Khandelwal, A., Agarwal, U., & Choudhury, M. (2023). Probing the moral development of large language models through defining issues test. arXiv e-prints, arXiv-2309. |

| [64] | van Griethuijsen, R. A. L. F., van Eijck, M. W., Haste, H., Den Brok, P. J., Skinner, N. C., Mansour, N., ... BouJaoude, S. (2015). Global patterns in students’ views of science and interest in science. Research in Science Education, 45(4), 581-603. |

| [65] | Wen, Z., Cao, J., Shen, J., Yang, R., Liu, S., & Sun, M. (2024). Personality-affected emotion generation in dialog systems. ACM Transactions on Information Systems, 42(5), 1-27. |

| [66] | Yang, S., Zhu, S., Bao, R., Liu, L., Cheng, Y., Hu, L., ... Wang, D. (2024). What makes your model a low-empathy or warmth person: Exploring the origins of personality in LLMs. arXiv preprint arXiv:2410.10863. |

| [67] | Zhang, H., & Zhao, H. (2022). How is virtuous personality trait related to online deviant behavior among adolescent college students in the internet environment? A moderated moderated-mediation analysis. International Journal of Environmental Research and Public Health, 19(15), 9528. |

| [68] | Zhang, Z., Han, X., Liu, Z., Jiang, X., Sun, M., & Liu, Q. (2019). ERNIE: Enhanced language representation with informative entities. arXiv preprint arXiv:1905.07129. |

| [69] | Zhao, W. X., Zhou, K., Li, J., Tang, T., Wang, X., Hou, Y., ... Wen, J. R. (2023). A survey of large language models. arXiv preprint arXiv:2303.18223. |

| [70] | Zhao, Y., Huang, Z., Seligman, M., & Peng, K. (2024). Risk and prosocial behavioural cues elicit human-like response patterns from AI chatbots. Scientific Reports, 14(1), 7095. |

| [71] | Zhou, X., & Liu, H. (2024). New ethical challenges of the digital and intelligence era (foreword). Acta Psychologica Sinica, 56(2), 143-145. |

