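1 Introduction

Common method variance (CMV) is an important methodological issue that is raised repeatedly in empirical social science research. Every variable carries some systematic variance induced by the particular method used to measure it, known as method variance. When two variables are measured with the same method, or with methods that share certain features (for example, data supplied by the same respondent), they share part of that method variance, which gives rise to common method variance (Podsakoff, MacKenzie, Lee, & Podsakoff, 2003; Spector & Brannick, 2010; 熊红星, 张璟, 郑雪, 2013). This in turn biases estimates of construct reliability and validity and of the observed correlations between constructs (MacKenzie & Podsakoff, 2012; Podsakoff, MacKenzie, & Podsakoff, 2012; 熊红星, 张璟, 叶宝娟, 郑雪, 孙配贞, 2012). Common method bias (CMB) is a concept derived from it: the degree to which an observed correlation departs from the true correlation. In most cases it appears as inflation or overestimation of the observed correlation, which can sometimes produce false positives and lead to erroneous causal inferences (Doty & Glick, 1998; Fuller, Simmering, Atinc, Atinc, & Babin, 2016; Min, Park, & Kim, 2016). Scholars worry about common method variance because it offers an alternative explanation, outside the research hypotheses, for the correlations between constructs. If relationships among constructs are largely attributable to spurious effects and artifacts of a shared method, the consequences for refining the nomological network and building theory would be disastrous (Reio, 2010).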

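In psychology, organizational management, and other behavioral sciences, common method variance is a sensitive and delicate topic. It is sensitive because it seriously threatens the credibility of research conclusions and bears directly on whether a paper gets published. It is delicate because the lively debate over whether it is a "deadly plague" or an "urban legend" (a claim that people know well and accept as true, although its truth cannot be guaranteed) has lasted 60 years without reaching consensus (Doty & Glick, 1998; Podsakoff et al., 2012; Richardson, Simmering, & Sturman, 2009; Spector, 2006). Two camps stand opposed: the "critics" insist that common method variance poses a huge validity risk and casts doubt on many findings, while the "defenders" hold that these charges are exaggerated. Despite the dispute, most journals and reviewers treat common method variance as an important factor in paper quality. Management journals are especially strict, and manuscripts with obvious common method variance concerns are often desk-rejected. According to one count, as early as 1998 to 2003 only 36 (4.13%) of the 871 empirical papers published in four leading international management journals used single-source data (彭台光, 高月慈, 林钲棽, 2006); even manuscripts by editorial board members of many international journals have been rejected because of common method variance (Pace, 2010).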

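In China, scholars began to encounter and pay attention to this issue after 周浩 and 龙立荣 (2004) introduced statistical tests and controls for common method bias. As multivariate statistical techniques matured and common method variance gained weight in peer review, researchers turned to procedural and statistical remedies. Testing for common method bias is now regarded as one of the foundations of modeling questionnaire data (温忠麟, 黄彬彬, 汤丹丹, 2018). The self-check report for submissions that 《心理学报》 (Acta Psychologica Sinica) updated in March 2018 also requires questionnaire studies in management, clinical, personality, social, and related areas to describe in detail how common method bias was tested and controlled, and this material is increasingly becoming standard content. However, with negative attitudes prevailing, anxious researchers feel compelled to meet the journals' demanding standards and try to dispel reviewers' doubts with all manner of "practical" devices, while neglecting the underlying theory. This has fostered conceptual misunderstandings, misuse of methods in practice, and misjudgments in academic evaluation. Meanwhile, prior assumptions about the threat of common method variance influence, wittingly or not, how researchers and reviewers handle and comment on it (Richardson et al., 2009). If we fail to see both sides of the issue, and merely run a perfunctory or ritualistic Harman single-factor test, research practice may go astray. It is therefore necessary to re-examine this "nuisance factor" and to check and correct such deviations in time. Starting from the empirical evidence, this article clarifies the relationship between common method variance and common method bias, reviews the responses and defenses offered against the threat of common method variance, proposes from a new perspective a method for assessing common method variance risk, and closes with conceptual and practical recommendations, in the hope of helping researchers clear up vague notions, adopt an unbiased attitude, and improve their handling strategies.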

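2 Alike in appearance, apart in essence: detecting and distinguishing common method variance and common method bias

Common method variance and common method bias arise together and are two sides of one problem. They are inseparable yet distinct. Many scholars treat them as interchangeable, but there is a clear boundary between them: each reflects, from a different angle, the "by-product" of construct measurement, namely method effects. Are they stably and widely present in research? Many scholars have tested this empirically. We first briefly review these results; the lessons they offer will help us grasp the deeper relationship between the two.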

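Historically, interest in common method variance has never waned since the multitrait-multimethod (MTMM) matrix was introduced. Within the MTMM model and classical test theory, the total variance of a construct is decomposed into true-score variance, method variance, and random error variance (Lindell & Whitney, 2001; Williams & Brown, 1994). Assuming that method variance equals common method variance, estimating the proportion of total variance due to method with the correlated trait-correlated method (CTCM) model became the best available way to detect common method variance (叶日武, 林荣春, 2014). Podsakoff et al. (2012) summarized five studies from 1987 to 2010 and found that method variance accounted for 18%~32% of total variance and trait variance for 40%~48%; that is, method variance made up more than 30% of all systematic variance. This suggests that the concern of Doty and Glick (1998), that common method variance has become an unavoidable problem in research, is not unfounded. However, identification failures and improper solutions limit the robustness of MTMM results (Malhotra, Schaller, & Patil, 2017; Meade, Watson, & Kroustalis, 2007), and more recent studies find that method variance accounts for only 6.59% to 16% of total variance (Malhotra, Kim, & Patil, 2006; Schaller, Patil, & Malhotra, 2015; 萧佳纯, 涂志贤, 2012), a marked decrease from studies before 2000, which is somewhat reassuring.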

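Because people care more about the observable impact of common method variance, common method bias emerged as its external manifestation. Relative to the true correlation, this "bias" may be inflation or deflation, but scholars are more sensitive to inflation, which can lead to false positives and Type I errors (Fuller et al., 2016). Estimating common method bias requires the difference between the observed and the true correlation. Since the true correlation is unknowable, the usual fallback is a quasi-experimental design that compares the correlation between the same pair of constructs when they are measured with the same versus different methods. How similar the constructs are in their method characteristics determines the size of the common method bias. The key method characteristics and the corresponding findings are as follows.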

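(1) Data source. Constructs may be measured from a single respondent or from multiple channels (such as several raters or objective records). It is plain that the great majority of criticism of common method variance is aimed at the design researchers use most: the self-report, single-source, cross-sectional survey (Brannick, Chan, Conway, Lance, & Spector, 2010; Chang, van Witteloostuijn, & Eden, 2010; Lai, Li, & Leung, 2013; Spector & Brannick, 2010). Many scholars believe that self-report data carry substantial common source bias and yield untrustworthy results, and some reviewers reject such manuscripts without a second thought (Brannick et al., 2010; Spector, 2006). Existing evidence does show that correlations obtained from a single respondent are higher. The degree of common source bias moderates the correlations between constructs (陈春花, 苏涛, 王杏珊, 2016). Two meta-analyses by Podsakoff and colleagues found that, compared with using different raters, a single respondent inflated correlations by 59.5%~304% (Podsakoff, Whiting, Welsh, & Mai, 2013; Podsakoff et al., 2012), and the relationships between individual or organizational performance and explanatory variables show a similar pattern (Andersen, Heinesen, & Pedersen, 2016; Meier & O’Toole, 2013; 苏中兴, 段佳利, 2015). Common source bias is more severe for highly subjective perceptual variables such as organizational commitment and job satisfaction (Favero & Bullock, 2015; Sharma, Yetton, & Crawford, 2009; Tehseen, Ramayah, & Sajilan, 2017).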

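(2) Measurement time. Constructs measured at the same time carry systematic covariation, because information retained in short-term memory raises the probability of consistent responding and thereby inflates correlations (Podsakoff et al., 2003). Studies show that correlations between constructs measured at different time points (1 day to 2 months apart) are clearly smaller than those obtained when all measures are completed in one sitting (Barraclough, af Wåhlberg, Freeman, Davey, & Watson, 2014; Johnson, Rosen, & Djurdjevic, 2011; Wingate, Sng, & Loprinzi, 2018).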

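(3) Questionnaire design. This mainly concerns scale format (such as Likert versus semantic differential scales), response anchors, and the semantic clarity of items. Measuring several constructs with Likert scales whose anchor content (such as agreement or frequency) and number of points (such as five-point scoring) are identical yields higher correlations (Podsakoff et al., 2013; Schwarz, Rizzuto, Carraher-Wolverton, Roldán, & Barrera-Barrera, 2017). Abstract, unclear, or ambiguous items inflate constructs' indicator loadings, composite reliability, and path coefficients (Schwarz et al., 2017; Schwarz, Schwarz, & Rizzuto, 2008).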
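The comparison logic behind these findings can be sketched in a few lines of Python. This is only an illustration: the function name `relative_inflation` and the input values are hypothetical and do not come from any of the cited studies. The sketch assumes that inflation is expressed as the percentage by which the same-method correlation exceeds the different-method correlation.

```python
def relative_inflation(r_same_method: float, r_different_method: float) -> float:
    """Percentage by which the same-method correlation exceeds the
    different-method correlation (assumed definition, for illustration)."""
    if r_different_method == 0:
        raise ValueError("the different-method correlation must be non-zero")
    difference = r_same_method - r_different_method
    return difference / abs(r_different_method) * 100.0


# Hypothetical values: r = 0.40 with a single respondent,
# r = 0.25 when the two constructs come from different raters.
example_inflation = relative_inflation(0.40, 0.25)
print(f"inflation: {example_inflation:.1f}%")
```

Note that this figure treats the different-method correlation as the benchmark, which is exactly the assumption that Section 3.3 calls into question.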

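This body of evidence helps us understand how common method variance and common method bias are related and how they differ. In terms of detection, the two follow different technical routes: common method variance is inferred from variance decomposition, whereas common method bias is inferred from distortion in correlation coefficients. The results do not fully agree. Because the high proportions of method variance found in early studies have not been replicated in recent ones, we can only say that common method variance does exist but is not necessarily a far-reaching "deadly plague". At the same time, most studies find that similar method characteristics inflate observed correlations, so concern about common method bias is not groundless.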

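Starting from these two seemingly contradictory conclusions, we summarize the relationship between common method variance and common method bias as follows. First, common method variance is the cause and common method bias the effect; common method variance is a necessary condition for common method bias. Identical or similar measurement methods enlarge the systematic variance shared by two constructs and lead to overestimated correlations. Second, the causal relationship is probabilistic rather than deterministic: when common method variance is present, common method bias becomes more likely but is not certain. For example, in Doty and Glick (1998), although 83% of observed correlations were inflated, more than half fell within the 95% confidence interval obtained after correcting for method factors. They concluded that common method variance is widespread in organizational research but that common method bias is less serious than expected, and that attention should focus on the size of common method bias rather than on whether common method variance exists; if common method variance does not affect statistical inference about substantive relationships between constructs, there is no need for excessive worry. Similarly, the simulation study by Fuller et al. (2016) found that, at conventional reliability levels, common method bias appears only when common method variance takes up a very large share (more than 60% of total variance); otherwise observed correlations differ little from the preset values. This means that common method variance is not a sufficient condition for common method bias. Third, inferring common method variance from common method bias is more or less feasible, but predicting common method bias from common method variance is not necessarily sound: even when substantial common method variance is detected, the observed correlation is not necessarily distorted "in step". In other words, no stable, strong correspondence between the two has been found. One possibility is that some constructs tolerate common method variance well while others are easily affected, so that the two kinds of constructs need different amounts of common method variance to "trigger" common method bias (see Section 4).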

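3 The case for the defense: "desensitizing" researchers to common method variance

Because many studies have consistently detected significant common method bias, more and more researchers believe it is a "big trouble" that seriously harms validity. Self-report research in particular is mired in a "crisis of trust" and is discriminated against by some exacting journals and reviewers, which epitomizes the field's unbalanced attitude toward common method variance. For the questionnaire method, which once did so much to make psychology scientific, this amounts to excessive censure (Kline, Sulsky, & Rever-Moriyama, 2000). Once the "charge" of common source bias is taken as "proven", it will not only shake the standing of the questionnaire method in social science methodology but also gravely threaten the many theories in psychology and management that were built on correlational research. Amid the wave of criticism, some scholars have held their ground and mounted a forceful defense, opposing the negative attitudes of overgeneralizing and of giving up a useful tool for fear of a small risk, and cautioning researchers against oversensitivity to common method variance.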

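3.1 Self-report is irreplaceable

Widespread prejudice and pressure from journals push researchers to abandon the simple design of collecting all data with one questionnaire and to fend off criticism with workarounds such as external data and temporal separation. Although this helps researchers commit to improving their designs, self-report should not be shut out across the board.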

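First, many constructs can only be measured appropriately by self-report. Psychological and management research often involves self-referential variables related to cognition, attitudes, emotions, values, and intentions. These point to the respondent's inner experience rather than to the objective environment or actual behavior, and can be measured validly only through introspection and self-report; the accuracy of data from other sources is hard to guarantee (Edwards, 2008; Podsakoff et al., 2013; 叶日武, 2015). Depressed mood, for example, is often hidden and rarely expressed openly, so only the person concerned knows his or her emotional state precisely, which is why it is mostly self-rated. If teachers were asked to rate students' depression, large errors could result (George & Pandey, 2017).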

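Second, self-report is valuable for exploratory research. In proposing and testing a theoretical hypothesis, researchers usually first develop instruments to operationalize the constructs and then conduct multivariate correlational studies under theoretical guidance. At this stage it is reasonable to measure several constructs simultaneously by self-report and examine their relationships (Brannick et al., 2010; Reio, 2010): this identifies, efficiently and economically, the antecedents and outcomes most closely tied to the focal construct and thereby enriches and refines the theory. If multi-source data are adopted blindly just to reduce common method bias and the correlations between constructs then turn out non-significant, theory building suffers a setback, and a new theory with real explanatory power may be shelved, so that the loss outweighs the gain.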

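Spector (2006), the advocate of the "urban legend" view, further argued that if all self-reports carried common source bias, there should be a baseline level ensuring that every observed correlation reaches statistical significance. In reality, non-significant correlations remain very common even in large-sample studies and between theoretically related constructs, which shows that self-report is far from a guarantee of significant correlations. In short, just as one should not throw the baby out with the bathwater, self-report studies should not be rejected indiscriminately without confirmation, still less "demonized".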

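3.2 Independent data sources are no "lifeline"

Independent data sources are external sources that do not depend on the respondent's self-report. They fall into two types: assigning different raters to different constructs in a study, typically employee-supervisor and child-parent pairs; and using existing archival records (such as exam scores or number of absences), that is, secondary data. As an alternative to self-report, independent data remove the biggest worry, the single data source, at its root. They are widely welcomed by scholars and regarded as the most direct and thorough solution (Chang et al., 2010; Favero & Bullock, 2015; Pace, 2010; Podsakoff et al., 2013). But are independent data sources really a cure for the "plague"? Perhaps not entirely, because the validity of data from external raters is not always satisfactory.

First, if raters do not know the ratee well or hold only partial information, their ratings may be out of touch with reality. Large discrepancies between other-ratings and self-reports are not rare, and since evaluations from different parties all carry substantive information, it is hard to determine which is more accurate (Spector & Brannick, 2009). A study by Spector and colleagues is telling. They obtained an "anomalous" result: compared with employee self-ratings, the correlation between counterproductive work behavior and organizational citizenship behavior was stronger when both were rated by supervisors. Their explanation was that counterproductive behavior is usually covert and hard for supervisors to detect, whereas organizational citizenship behavior is more public and easily recognized; this asymmetry in information accuracy prevents supervisors from distinguishing the two as clearly as employees themselves can, which inflates the correlation (Spector, Bauer, & Fox, 2010). Low-visibility behaviors such as counterproductive behavior are thus ill-suited to other-rating.

Second, other-rating appears to eliminate common source bias but is in essence still a form of self-report. It cannot eradicate all method biases, in particular certain item-level biases (Edwards, 2008; Meier & O’Toole, 2013) and the convergence of perceptions among members of the same organization (苏中兴, 段佳利, 2015).

Third, matching data from different sources usually entails sample attrition and may introduce sampling bias. For example, employees may pass the paired questionnaire to their supervisor only when they expect a positive performance evaluation or have a good relationship with the supervisor (e.g., Carter, Mossholder, Field, & Armenakis, 2014). In addition, simulation research shows that, because some substantive variance is missed, multi-rater assessments also bias results and are no more accurate than self-report (Kammeyer-Mueller, Steel, & Rubenstein, 2010).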

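Secondary data are relatively objective and less likely to be contaminated by the subjectivity of self-report, but the collection of some archival data is not open and transparent. It resembles a "black box" beyond the researcher's active control. The data may be distorted by manipulation (such as selective recording or deletion), so their quality cannot be guaranteed, and reviewers who warmly welcome such data generally do not scrutinize them (George & Pandey, 2017). These are clearly potential "sources of contamination".

In sum, independent data sources can remove most common method variance and have their advantages, but they are by no means beyond reproach. If raters cannot rate objectively and accurately, or if secondary data are distorted, serious bias will result all the same. Independent data sources need not be overpraised, nor can they fully replace self-report.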

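3.3 The methods for detecting common method bias are flawed

As noted above, the basic approach to detecting common method bias is to compare the correlation between two constructs under different method characteristics, by setting up several "experimental" groups that use different measurement methods. The approach looks rigorous and its conclusions seem well founded, but closer inspection reveals loopholes.

First, a key assumption of the comparison approach is that results obtained with multiple methods are free of common method bias and more accurate than single-method results. With the true correlation unknowable, the correlation in the multi-method group is taken by default to approximate the true value. This "yardstick" is not necessarily reliable, however, because the multi-method result may itself be quite inaccurate and unfit to serve as a standard (Schaller et al., 2015). Lance, Dawson, Birkelbach, and Hoffman (2010) showed that in the MTMM model the correlation between methods affects the correlation between constructs: as long as method effects are significant (indicator loadings on the corresponding method factors are non-zero) and the methods are positively correlated, the observed correlation is inflated. Unfortunately, meta-analysis finds precisely that methods are mostly positively correlated, so observed correlations between constructs measured with different methods are generally biased as well. Strictly speaking, comparing single-method with multi-method results can assess common method bias only if the correlation between methods is zero. Such a stringent condition can almost never be met in practice, and a comparison against a biased result means little.

Second, although several "experimental" groups are set up and individual method characteristics are manipulated, process control is far less strict than in a true experiment, and many extraneous variables may contaminate the results, most notably the measurement context and medium. In Johnson et al. (2011), for example, some respondents in the same group took a paper-and-pencil test while others completed an online questionnaire. These are themselves different methods that affect how respondents answer (Weijters, Schillewaert, & Geuens, 2008), yet the researchers did not control for this. Moreover, because convenience sampling is typical, random assignment is difficult, and no pretest is used to check whether the groups share the same baseline before the "treatment" (nor would one be practical). It is therefore hard to ensure that the groups are homogeneous, and impossible to determine how much of the difference in correlations across groups is due to the different method characteristics.

In short, the results of the comparison approach often overstate common method bias, are not very convincing, and should be treated with caution.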

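3.4 The offsetting effect of measurement error

Although the variance of observed item scores has three components, most researchers attend only to the relative sizes of method variance and trait variance and habitually leave measurement error unanalyzed as if it were dispensable. In fact, error variance makes up a considerable share of total variance (Lance et al., 2010; Podsakoff et al., 2012) and should not be "selectively" ignored. Lance and colleagues (Brannick et al., 2010; Lance et al., 2010) argue that measurement error is synonymous with unreliability (the degree to which the reliability coefficient falls below 1) and is directly related to observed correlations between constructs and to common method bias. In general, if two constructs X and Y are measured with the same method, the observed correlation $r_{XY}$ can be expressed as the sum of a trait effect and a method effect:

$$r_{XY} = \lambda_{T_X}\lambda_{T_Y}\rho_{T_X T_Y} + \lambda_{M_X}\lambda_{M_Y} \qquad (1)$$

Here $\lambda_{T_X}$ and $\lambda_{T_Y}$ are the reliability coefficients of X and Y, $\rho_{T_X T_Y}$ is the true correlation between X and Y, and $\lambda_{M_X}$ and $\lambda_{M_Y}$ are the effects of the single measurement method M on X and Y. The observed correlation between X and Y is inflated by the method effect term $\lambda_{M_X}\lambda_{M_Y}$, which produces common method bias. At the same time, because the product of the reliability coefficients $\lambda_{T_X}\lambda_{T_Y}$ is well below 1 (at the conventional reliability level of 0.8 it equals 0.64), the correlation is attenuated. Adding the two terms, the net effect on the observed correlation has three possible outcomes: (a) above the true value (inflation exceeds attenuation); (b) below the true value (inflation is smaller than attenuation); (c) equal to the true value (inflation equals attenuation) (Conway & Lance, 2010). This suggests that the "neutralizing" effect of measurement error may keep the observed correlation from straying too far from the true value, thereby holding common method bias to a low level.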
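The three outcomes can be made concrete with a minimal Python sketch of the relation in which the observed correlation is the reliability-attenuated true correlation plus the product of the two method effects. The function names and all numerical inputs below are hypothetical and chosen only for illustration; they are not taken from Lance et al. (2010) or Conway and Lance (2010).

```python
def observed_correlation(rel_x: float, rel_y: float, true_r: float,
                         method_x: float, method_y: float) -> float:
    """Trait term (attenuated by reliability) plus method term."""
    trait_term = rel_x * rel_y * true_r
    method_term = method_x * method_y
    return trait_term + method_term


def net_effect(observed: float, true_r: float, tol: float = 1e-9) -> str:
    """Classify the net bias as inflation, deflation, or none."""
    if abs(observed - true_r) <= tol:
        return "unbiased"
    return "inflated" if observed > true_r else "deflated"


# Hypothetical scenarios with reliabilities of 0.8 and a true correlation of 0.5.
weak_method = observed_correlation(0.8, 0.8, 0.5, 0.3, 0.3)    # 0.32 + 0.09
strong_method = observed_correlation(0.8, 0.8, 0.5, 0.5, 0.5)  # 0.32 + 0.25
break_even = observed_correlation(0.8, 0.8, 0.5, 0.6, 0.3)     # 0.32 + 0.18

for label, value in [("weak", weak_method), ("strong", strong_method),
                     ("break-even", break_even)]:
    print(label, round(value, 3), net_effect(value, 0.5))
```

Under these assumed inputs, attenuation and inflation cancel exactly when the product of the method effects equals the true correlation multiplied by one minus the product of the reliabilities (here 0.5 × 0.36 = 0.18). When the true correlation is zero, the trait term vanishes and only the method term remains, so any non-zero shared method effect shows up as pure inflation.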

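The reanalysis of 18 MTMM matrices by Lance et al. (2010) supports this view. The mean observed correlation between two constructs measured with the same method was 0.340, and the correlation computed from Equation (1) was 0.332; the two are very close. After method factors were added, the mean correlation between trait factors (an unbiased estimate of the true correlation $\rho_{T_X T_Y}$) was 0.371, not far from the first two values. They concluded (p. 444) that the "urban legend" that common method effects inflate single-method correlations has some truth to it, but that the claim that such correlations exceed their true values is a myth, because measurement error has an attenuating effect. In another simulation study, Fuller et al. (2016) manipulated the proportion of common method variance, reliability, the true correlation, and other parameters. They found that when reliability was slightly below the conventional level (0.77~0.80), common method variance deflated correlations; conversely, when reliability was extremely high (0.97~0.99), common method variance inflated them. This fits the position of Lance et al. well: common method variance exists, but whether it causes notable common method bias depends partly on the attenuating effect of measurement error. In the special case where the inflating effect of method is exactly cancelled by the attenuating effect of measurement error, a correlation obtained with a single method accurately reflects the true relationship between the constructs.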

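This argument is a forceful rebuttal of the "deadly plague" view and rests on fairly solid theoretical and empirical grounds, but Lance himself admits that it has not yet won broad acceptance. Leaving other issues aside, the explanation first runs against common sense: high reliability should be the ideal state that research strives for, yet it also weakens the offsetting effect of measurement error and fosters inflated correlations. In other words, the higher the reliability, the larger the common method bias, which is puzzling. Scholars have raised other objections as well. Favero and Bullock (2015) argue that the explanation of Lance et al. does not apply when the true correlation between constructs is zero: the observed correlation then cannot be attenuated further and can only be inflated by common method variance, and once its absolute value is significantly greater than zero a false positive has occurred, the Type I error that researchers strive to avoid. When the true and observed correlations are both significantly non-zero, the relative sizes of attenuation and inflation at most change the estimated correlation and are unlikely to affect significance (turning a significant correlation into a non-significant one), so the matter is less pressing. Meier and O’Toole (2013) caution that even if measurement error can potentially offset inflation, this does not mean common method bias can be ignored: the amount of common method variance differs greatly across disciplines, studies, and even pairs of constructs, and in studies at high risk of common method variance the offset may be inadequate and unable to rule out common method bias. Indeed, not every study can obtain a result as neat and coincidental as that of Lance et al. An exact match between the amount of inflation and the amount of attenuation may be a low-probability event with little generality. Even so, the initial exploration by Lance et al. reveals another facet of reliability and measurement error and is highly instructive.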

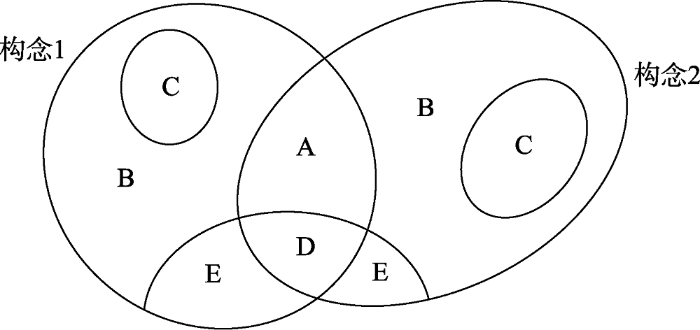

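3.5 The trade-off between uncommon and common method variance

Conway and Lance (2010) list "other-ratings are superior to self-ratings" as one of reviewers' three major misconceptions about common method bias, because ratings from different raters (and, by extension, other differing method characteristics) produce unshared method effects, or unshared irrelevant variance, which attenuates correlations between constructs (Brannick et al., 2010). The notion of "unshared method effects" implies a shift in perspective: besides attending to what measurement methods have in common, one should also attend to how they differ, because these differences are a potential counterweight to common method variance. Inspired by this, Spector and colleagues (Spector, Rosen, Richardson, Williams, & Johnson, in press) defined method variance more comprehensively as exogenous, unintended systematic influences on measured variables. One part is shared by several variables and constitutes common method variance; the other part affects individual variables separately without overlap and is called uncommon method variance. Common and uncommon method variance are complementary and together make up total method variance (Figure 1 shows how the variance components relate). They are inseparable yet mutually constraining, with one rising as the other falls. Whether or not the common method variance in a study is significant, and however large it is, some uncommon method variance is bound to exist, because the methods used to measure the constructs always differ to some extent. Seen from another angle, the higher the correlation between methods, the larger the common method variance; the lower the correlation, the larger the uncommon method variance.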

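The variance of observed item scores can thus be decomposed as

$$V_O = V_C + \sum_i V_{M_i} + V_E \qquad (2)$$

where $V_O$ is total variance, $V_C$ is the variance due purely to the construct itself, $V_{M_i}$ is the method variance due to each method characteristic $M_i$, and $V_E$ is error variance. $\sum_i V_{M_i}$ equals the sum of common method variance and uncommon method variance.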

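Uncommon method variance is systematic error. It is not the same thing as measurement error or unreliability, but it functions similarly. Taken alone, it weakens correlations between constructs, lowers effect sizes, distorts the estimates of complex statistical techniques, and leads to Type II errors. In actual research the two kinds of method variance coexist, and the picture is more complicated. The observed correlation $r_{XY}$ between two constructs X and Y equals

$$r_{XY} = \frac{\mathrm{Cov}(X, Y)}{\sqrt{\mathrm{Var}(X)\,\mathrm{Var}(Y)}} \qquad (3)$$

Consider first the numerator on the right-hand side. Ignoring measurement error, the systematic variance of X and of Y each comprises construct variance and method variance, so their covariance is the sum of four parts:

$$\mathrm{Cov}(X, Y) = \mathrm{Cov}(X_C, Y_C) + \mathrm{Cov}(X_C, Y_M) + \mathrm{Cov}(X_M, Y_C) + \mathrm{Cov}(X_M, Y_M) \qquad (4)$$

where the subscript C denotes the construct and the subscript M denotes the method. It is usually assumed that constructs and methods do not interact (the additive effects model) (Doty & Glick, 1998), so the second and third terms on the right-hand side of Equation (4) equal zero. The fourth term represents common method variance. When X and Y are measured with the same method it is significantly greater than zero, so the total covariance increases and the observed correlation is inflated. If the measurement methods differ, the fourth term equals zero (this assertion by Spector et al. is somewhat hasty, because the meta-analysis of Lance et al. (2010) shows that different methods are mostly positively correlated, so their covariance is generally not zero). In that case the covariance of X and Y does not increase, but uncommon method variance enlarges the systematic variance of X and of Y themselves, so the denominator on the right-hand side of Equation (3) increases and the observed correlation is attenuated. The net effect of method variance on the observed correlation therefore depends on the relative sizes of the two kinds of method variance and on the relative changes in the numerator and denominator of Equation (3).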

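This analysis shows that uncommon method variance matters in two ways. First, when two constructs are measured with the same method, its attenuating effect combines with that of measurement error to restrain common method bias. Second, when the measurement methods differ, uncommon method variance is large, which reduces the share of substantive covariation between constructs (region A in Figure 1) in total variance and leads to underestimated correlations. Spector et al. hold that different data sources are especially prone to introducing uncommon method variance. This can explain why the correlation between supervisor-rated team performance and employee-rated leadership style may fall below the true value, and it is a further argument against the claim that "correlations obtained with multiple measurement methods are unbiased estimates of the true correlation".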
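A small analytic sketch in Python may help to show how the numerator and denominator of the correlation move in opposite directions. It assumes the additive model (no covariance between constructs and methods) and uses hypothetical variance components; the function name `observed_r` and every number below are illustrative assumptions, not estimates from Spector et al. or any other cited study.

```python
import math


def observed_r(construct_cov: float,
               construct_var_x: float, construct_var_y: float,
               common_method_cov: float,
               method_var_x: float, method_var_y: float,
               error_var_x: float = 0.0, error_var_y: float = 0.0) -> float:
    """Observed correlation under an additive model.

    Numerator: construct covariance plus the covariance between the
    method components (the common method part).
    Denominator: total variances, which grow with ALL method variance,
    whether shared or not, and with error variance.
    """
    if abs(common_method_cov) > math.sqrt(method_var_x * method_var_y) + 1e-12:
        raise ValueError("method covariance exceeds what the method variances allow")
    cov_xy = construct_cov + common_method_cov
    var_x = construct_var_x + method_var_x + error_var_x
    var_y = construct_var_y + method_var_y + error_var_y
    return cov_xy / math.sqrt(var_x * var_y)


# Hypothetical components: unit construct variances, true correlation 0.5.
no_method = observed_r(0.5, 1.0, 1.0, 0.0, 0.0, 0.0)
same_method = observed_r(0.5, 1.0, 1.0, 0.3, 0.3, 0.3)       # method fully shared
different_methods = observed_r(0.5, 1.0, 1.0, 0.0, 0.3, 0.3)  # nothing shared

print(round(no_method, 3), round(same_method, 3), round(different_methods, 3))
```

With these assumed values the fully shared method raises the correlation above 0.5, while two unrelated methods of the same size pull it below 0.5, which mirrors the argument that multi-method correlations are not automatically unbiased.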

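Figure 1. Decomposition of the total variance of constructs

Note: A = substantive covariation, B = unique irrelevant variance, C = measurement error variance, D = common method variance, E = uncommon method variance. D and E together make up method variance; A and D together determine the size of the observed correlation between the two constructs.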

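By proposing uncommon method variance as the counterpart of common method variance, Spector et al. take a pointedly opposing stance. Although the account is still tentative and lacks empirical evidence, it advances our understanding of method variance, helps clarify how method variance relates to common method variance, and moves beyond the simplistic view that equates the two. Uncommon method variance complements the measurement-error offset account of Lance et al. Both views explain fairly well when common method bias appears as inflation and when as deflation, and both challenge the conventional beliefs that "self-report suffers from serious common method bias" and that "multi-rater assessment is free of common method bias". They are useful explorations that deserve recognition.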

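4 Method is not everything: a new measure-centric perspective

The back-and-forth between "critics" and "defenders" makes it hard to reach a verdict on the threat of common method variance. Perhaps no such universal verdict exists, and only a finer-grained view of common method variance can point to the right response. Researchers commonly hold a misconception: they take for granted that common method variance is "tied" to a particular measurement method, so that whenever several constructs are measured with that method they are bound to be contaminated. Put differently, they assume that method is the sole trigger of common method variance and that the constructs being measured are irrelevant. Driven by this belief, people faced with a paper based entirely on self-report tend to seize on common method variance while failing to examine the characteristics of the variables and the appropriateness of self-report.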

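The flaw in this view is that it sees only the role of method in producing common method variance and severs the link between method and construct. Spector (2006) stressed that it is an exaggeration and oversimplification to think that all constructs measured with a given method automatically carry some general shared variance. In his view (p. 231), common method variance calls for more careful thought, and neither authors nor reviewers should criticize common method variance or mono-method bias by reflex; the term common method variance and its derivatives should be retired in favor of thinking about specific biases and plausible alternative explanations for relationships between variables. To "retire" the term is not to deny that common method variance exists, but to say that its generality across different combinations of constructs should not be overstated. According to the empirical results reviewed above, the size of common method variance and common method bias varies greatly across disciplines and combinations of constructs. This shows that common method variance cannot be blamed entirely on a method; it is the product of the interaction between the measurement method and the measured construct, or a function of method and construct (Williams, Hartman, & Cavazotte, 2010). Common method variance arises not at the level of the method but at the level of the construct. Because constructs differ in nature, different combinations of constructs measured with the same method face different risks of common method variance. For example, self-report may distort the relationship between two perceptual variables but not necessarily that between a factual variable (such as age or another demographic variable) and a perceptual variable, because factual information is less prone to bias from the measurement method. This is the measure-centric perspective. It assumes that the operationalization of each construct (the combination of method and trait) carries some unique biases. (According to Spector, "bias" here is meant broadly: it covers not only method bias but all other biases that may affect relationships between constructs, such as third variables.) Common method bias can arise only when the biases carried by the operationalizations of several constructs overlap (Brannick et al., 2010; Spector, 2006; Spector et al., in press).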

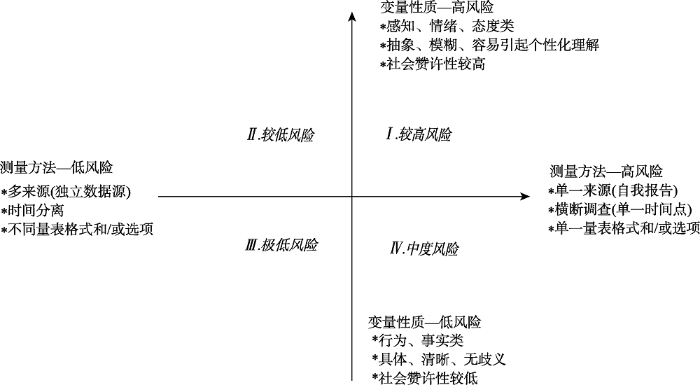

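The lesson of the measure-centric perspective for researchers and reviewers is that common method variance should not be treated sweepingly as a universal problem in a study. The risk of common method variance and its sources should be analyzed for each pair of variables in order to identify the combinations most likely to be contaminated. Following this line of thought, we suggest dividing the main sources of common method variance risk into two dimensions, variable and method. Key elements of the method dimension include whether data come from a single source, whether measures were taken at the same time, and whether the same scale format and anchors were used. Key elements of the variable dimension include whether the variables are perceptual, their abstractness, and their social desirability. With method-induced risk on the horizontal axis and variable-induced risk on the vertical axis, a "coordinate system for assessing common method variance risk" can be formed, as shown in Figure 2.

Figure 2. Coordinate system for assessing common method variance risk

For every pair of variables in a study that stand in a predictor-outcome relationship, this coordinate system can be used to judge the risk of common method variance by assessing how similar the two variables are on each key element of the method and variable dimensions. To make the assessment more precise, each element can be scored on the basis of existing findings and personal experience. Because the elements differ in influence (weight), their scoring ranges also differ; see Table 1 (higher scores indicate greater risk). Summing the scores within each dimension locates the pair in the coordinate system and gives a first indication of how strongly that pair is affected by common method variance. By extension, averaging the scores of all focal variable pairs gives an assessment of the common method variance risk of the study as a whole.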

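Table 1. Scoring rules for assessing common method variance risk

| Risk source | Score range | Description |
|---|---|---|
| Method dimension | | |
| Data source | -4~4 | Score 4 if entirely self-reported; score -4 if the data sources differ |
| Measurement time | -3~3 | Score 3 if completed in one sitting; the longer the interval, the lower the score, e.g., -1 for a 2-day interval and -2 for a 1-week interval |
| Scale format and anchors | -2~2 | Score 2 if the two variables use exactly the same format and anchors; the greater the difference, the lower the score |
| Variable dimension | | |
| Perceptual or not | -2~2 | Score 2 if both variables are perceptual; score -2 if at least one is not |
| Abstractness | -2~2 | Score 2 if both variables are very abstract or vague; score -2 if at least one is fairly concrete |
| Social desirability | -2~2 | Score 2 if both variables are clearly socially desirable; score -2 if at least one is low in social desirability |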
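The scoring procedure described for Table 1 can be sketched in Python as follows. The score ranges come from Table 1; the dictionary keys, function names, and the example scores are hypothetical labels introduced only for this illustration, and the article itself stresses that the scoring scheme is rough and subjective.

```python
from typing import Dict, List, Tuple

# Allowed score ranges per element, taken from Table 1.
SCORE_RANGES = {
    "data_source": (-4, 4),
    "measurement_time": (-3, 3),
    "scale_format": (-2, 2),
    "perceptual": (-2, 2),
    "abstractness": (-2, 2),
    "social_desirability": (-2, 2),
}
METHOD_ELEMENTS = ("data_source", "measurement_time", "scale_format")
VARIABLE_ELEMENTS = ("perceptual", "abstractness", "social_desirability")


def risk_coordinates(scores: Dict[str, float]) -> Tuple[float, float]:
    """Return (method-dimension total, variable-dimension total) for one pair."""
    for element, (low, high) in SCORE_RANGES.items():
        if element not in scores:
            raise KeyError(f"missing score for {element}")
        if not low <= scores[element] <= high:
            raise ValueError(f"{element} must lie between {low} and {high}")
    method_total = sum(scores[e] for e in METHOD_ELEMENTS)
    variable_total = sum(scores[e] for e in VARIABLE_ELEMENTS)
    return method_total, variable_total


def study_risk(pairs: List[Dict[str, float]]) -> Tuple[float, float]:
    """Average the coordinates over all focal variable pairs in a study."""
    coords = [risk_coordinates(p) for p in pairs]
    n = len(coords)
    return sum(c[0] for c in coords) / n, sum(c[1] for c in coords) / n


# Hypothetical pair: both self-reported, measured one week apart, same format;
# both perceptual and abstract, one of them low in social desirability.
pair = {"data_source": 4, "measurement_time": -2, "scale_format": 2,
        "perceptual": 2, "abstractness": 2, "social_desirability": -2}
print(risk_coordinates(pair))
```

Plotting each pair's two totals as a point, with the method total on the horizontal axis and the variable total on the vertical axis, corresponds to locating the pair in the risk coordinate system.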

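This method offers one way to assess common method variance in a particular study in detail. It helps researchers anticipate the sources of common method variance and adopt targeted controls, and it helps reviewers give reasoned evaluations rather than the sweeping criticism that "this study has a serious common method bias problem". Admittedly, the scheme is still very rough, and much about the method elements and weights it includes is open to debate. Accurate scoring depends on a deep understanding of common method variance and rich practical experience, and it is unavoidably subjective. We hope colleagues will take this as a starting point and, through systematic and thorough research, offer insights that make it more complete and more workable.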

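5 Summary and recommendations

The problem of common method variance has resisted repeated attempts at resolution. The landmark review by Podsakoff et al. (2003), one of the most influential methodological articles published in the Journal of Applied Psychology since 1990 (Cortina, Aguinis, & Deshon, 2017), prompted many scholars to take common method variance seriously and to search hard for solutions. (Google Scholar data retrieved on 8 November 2018 show that the article had been cited more than 32,000 times.) Yet the evenly matched debate between "attackers" and "defenders" has made the contest between the "deadly plague" and "urban legend" views ever more confusing. To this day the unsolved puzzles may far outnumber the solved ones, and more often than not we do not really know what we think we know (Spector et al., 2010). Although it has become the fashion in China to "besiege" common method variance, we believe that until decisive, conclusive evidence appears, a cautious and balanced attitude is called for: neither overreacting nor ignoring the issue. With a view to handling common method variance soundly and improving research quality, we offer the following modest recommendations for discussion.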

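First, approach common method variance with a tolerant and open mind. Method variance is an inherent property of constructs, and the methods used to measure two constructs almost always share some features. Even with procedural and statistical controls it is very hard to remove common method variance completely. Moreover, some common method variance touches the substantive component of constructs and is not entirely harmful (Lance, Baranik, Lau, & Scharlau, 2009). The existence of common method variance can therefore be regarded as natural and unavoidable. It is better to accept it than to strive at all costs to eliminate it. We hope the academic community, reviewers in particular, will reach a consensus to tolerate the shortcomings that common method variance brings and to offer constructive suggestions for improving research designs in light of actual conditions, rather than brandishing common method variance as a "trump card" for fault-finding.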

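Second, correct the prejudice against self-report. Amid widespread "validity anxiety", researchers especially need to weigh the strengths and weaknesses of self-report realistically. Different combinations of constructs differ in their "susceptibility" to common method variance. Self-report should not be blamed for everything without examination, still less rejected wholesale on the preconception that "self-report must have been contaminated by common method variance". Of course, where conditions permit, obtaining data from different sources is still recommended (employees' job performance, for example, is best rated by supervisors). But this is "strong medicine": one must consider whether a given construct is suited to other-rating, whether others can rate it accurately, and the possibility of underestimating correlations, and then weigh the various risks. In many cases self-report remains the method of choice. If the theoretical basis for the association between constructs is solid, the observed correlation is large (say, above 0.5), the constructs are fairly concrete or mostly involve observable behavior, and data quality is high (high reliability and validity), the threat of common method variance is relatively small (Batista-Foguet, Revilla, Saris, Boyatzis, & Serlavós, 2014; Rindfleisch, Malter, Ganesan, & Moorman, 2008), and at least the false positives that researchers fear most are less likely. If, however, a study contains many self-referential perceptual constructs, the correlations barely reach significance, the items are vague and abstract, and there were disturbances during administration or respondents were uncooperative, the risk of common method variance is greater. It should be explained that, compared with high correlations, correlations that are low in absolute value or only just pass the p < 0.05 "threshold" deserve more vigilance: if they are caused by common method variance, two constructs that are in fact unrelated have acquired a spurious correlation, which will seriously mislead subsequent research. In sum, self-report retains great value and is by no means worthless. When multi-source data are used, their suitability must be assessed and the risk of introducing other biases accepted, choosing the lesser of two evils.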

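Third, improve remedial strategies. Appropriate control or remediation is still necessary to make findings more robust. The most fundamental step is sound research design: analyze the variables and the intended measurement methods as a whole in advance, identify the sources of common method variance, and work from two ends, separation strategies in data collection and improvement of measurement instruments (吕宛蓁, 萧嘉惠, 许振明, 曹校章, 王学中, 2012; 彭台光等, 2006). A systematic plan should combine temporal separation, varied scale anchors, reverse-scored items, improved item wording, removal of semantically similar items across constructs, and efforts to secure respondents' cooperation, so as to reduce consistent, biased, and careless responding. Note that the choice of strategy should rest on ruling out confounding variables in order to strengthen causal inference, and should depend on the specific conditions of the study. There is no need to complicate the design at great pains merely to satisfy reviewers. For example, although temporal separation is often recommended as effective (Craighead, Ketchen, Dunn, & Hult, 2011; MacKenzie & Podsakoff, 2012), whether a study with mediators needs such longitudinal data depends on the research question and the variables (温忠麟, 2017). Controlling something that may not exist (Brannick et al., 2010) at the cost of sample attrition is not necessarily wise.

As studies include more variables and models grow more complex, assigning a different rater to every variable or separating all measurements completely in time is unrealistic. (Take research on leadership effectiveness as an example. The common practice is for superiors to rate the outcome variable, such as employee performance, while employees rate leadership style and the mediator; the risk of common source bias then remains between the independent variable and the mediator unless every variable comes from a different rater. "Complete temporal separation", as in Sample 4 of Study 2 in Johnson et al. (2011, p. 753) and in 郑晓明 and 刘鑫 (2016), is hard to achieve in practice.) We suggest that researchers focus on the essentials: use the risk assessment method proposed here to find the variable pairs that raise the greatest concern (such as two abstract perceptual variables) and apply preventive measures where they matter most.

On the other hand, we observe that most researchers in China are adept at post hoc statistical detection and control but say little about controls in research design and implementation, which reveals an unhealthy tendency to favor statistical remedies over prevention. Regrettably, most statistical techniques are either not very effective or have obvious drawbacks, and none is a cure-all. Rather than "mending the fold after the sheep are lost", they merely offer psychological comfort or a "false sense of security" (Brannick et al., 2010) and should be used with caution. For example, the Harman single-factor test, the technique researchers know best and use most often, cannot control or correct common method bias at all. (The first author made a rough count of 128 papers based mainly on questionnaires published in 2017 in 《心理学报》, 《心理科学》, and 《心理发展与教育》 and found that 96 of them, three quarters, used the Harman single-factor test. For related commentary see 朱海腾 (2018-06-19).) At best it detects common method variance crudely, and with very poor sensitivity (e.g., Chang et al., 2010; Malhotra et al., 2017; Tehseen et al., 2017; 刘洋, 谢丽, 2017; 朱海腾, 2018-06-19). We recommend abandoning it. It is preferable to measure and control known sources of variance directly (such as social desirability and response tendencies), although this is far from foolproof. One should bear in mind that "one well-thought-out research design is worth more than ten ingenious remedies" (彭台光等, 2006, p. 91), take improved research design as the foundation, and rely less on statistical techniques.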

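Fourth, strengthen basic research on common method variance. The scarcity of research in China greatly limits scholars' understanding of the issue, whereas international research is rich and concentrated largely in organizational and management fields, and deserves more attention. Many puzzles remain unsolved. First, many scales contain reverse-scored items, and some scholars recommend using bifactor models at the scale validation stage to separate the method variance due to positive versus negative wording and so improve construct validity (e.g., Paiva-Salisbury, Gill, & Stickle, 2016; 顾红磊, 温忠麟, 2017; 张春雨, 韦嘉, 赵清清, 张进辅, 2015). Yet reverse-scored items often work poorly in administration because respondents misread them. How should the number and position of reverse-scored items be arranged so that they reduce common method bias without harming validity? Second, current research on common method variance is mostly confined to simple bivariate correlations. How does it affect multivariate studies involving mediation and moderation (Siemsen, Roth, & Oliveira, 2010; Spector et al., in press) and multilevel studies with nested data (Lai et al., 2013)? This is undoubtedly of even greater interest. Third, on the statistical side, the CFA-based marker variable technique (Williams et al., 2010), much discussed internationally, and the recently proposed hybrid method variables model (Williams & McGonagle, 2016) have barely been noticed by scholars in China. Is their "efficacy" satisfactory? Can simpler and more serviceable techniques be developed?

Common method variance may be described as a specter hovering over social science research that can hardly be exorcised for good, however hard we try. We need not lose heart: it is enough to face it squarely and do our best. As Kammeyer-Mueller et al. (2010, p. 317) put it, although we eagerly hope for simpler ways to reduce error and covet the precise measurement tools of the traditional "hard" sciences, the sign that our discipline is maturing is that we accept the imperfections of measurement and take the steps needed to overcome these obstacles.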

References

中国情境下变革型领导与绩效关系的Meta分析

DOI:10.3969/j.issn.1672-884x.2016.08.007

URL

[本文引用: 1]

基于包含426个效应值、26 092个独立样本,总样本量达1 01 358个的117篇独立实证文献进行了Meta分析。研究结果表明,相对于西方情境,变革型领导在中国情境下能带来更高的绩效;中国情境下变革型领导对员工个体绩效(任务、关系、创新绩效)、团队绩效、组织绩效均有显著的促进作用,且促进作用均大于西方情境,但对不同类型个体绩效促进作用的强弱排序与西方情境不一致;情境因素(组织属性、领导层次、地区属性)和测量因素(数据属性、测量工具、同源偏差程度)能够显著调节变革型领导与个体绩效的关系,但调节强度与西方情境也有所不同。研究结果可让中国情境下变革型领导与绩效的相关性研究获得阶段性定论。

多维测验分数的报告与解释: 基于双因子模型的视角

在心理、行为、管理和教育等社科领域,经常使用多维测验。本文评介并比较了各种多维测验的测量模型;总结了基于双因子模型计算得到的统计指标;根据不同研究目的,提出了两个兼顾简洁性和精确性的多维测验分析流程。作为例子,在马基雅维利主义人格量表的研究中,通过双因子模型分析了如何报告、解释多维测验分数以及如何利用多维建模进行后续分析。

中国管理研究中问卷调查法的取样与测量合适性: 评估与建议

DOI:10.14071/j.1008-8105(2017)02-0024-08

URL

[本文引用: 1]

问卷法作为中国管理研究中最普遍的研究方法近来受到较多质疑。问卷调研法的“严谨性”本身没有问题,而是由于部分学者在使用这一方法过程中的不严谨性(特别是在取样和测量方面),导致学者对此方法产生了一定的误解。基于此,针对近十年发表在《管理世界》上的137篇采用问卷调研法的演绎性研究,对其取样和测量合适性进行评估,提出了八个常见的问题,并以一篇范文为例,提出了对应的建议,以期为采用问卷调研法的管理研究提供一定的借鉴。

同源主观数据是否膨胀了变量间的相关性——以战略人力资源管理研究为例

DOI:10.14086/j.cnki.wujss.2015.06.010

URL

[本文引用: 2]

研究变量之间的相关性是现代管理学实证研究的基本范式。然而,变量之间统计上的相关性极易受到变量的测量方法和数据类型的影响。特别是,基于同源数据的实证研究会夸大变量间的相关程度甚至带来虚假相关,这已经给管理学实证研究带来了严峻挑战。不幸的是,目前仍有大量的实证研究都在使用同源主观数据。本文以人力资源管理和企业绩效的关系为例,检验了在同一样本中,对变量的不同测量和数据类型如何导致变量间相关性结论的差异。结果显示,当自变量和因变量是"同源主观数据"时,自变量和因变量之间的相关程度最高;当自变量和因变量是"非同源主观数据"时,相关程度有所下降;当自变量采用主观数据,而因变量采用客观数据时,这种相关没有达到显著性。本文的研究例子表明,在管理学实证研究中一定要谨慎使用同源的主观测量数据。

实证研究中的因果推理与分析

心理学期刊中的实证研究论文,很多时候都在检验变量之间的因果关系,但学界对因果研究存在一些不同的看法。本文试图回答下面问题:(1)实验中不能操纵的变量是否可以作为原因?(2)非实验研究能不能检验因果关系?(3)因果分析(尤其是中介分析)是否一定要使用追踪数据?追踪设计的主要目的是什么?通过因果要素和因果推理逻辑的辨析,对前面两个问题都给出了肯定的回答,并讨论了判明变量先后顺序、统计控制无关变量的方法;为了回答问题3,厘清了追踪设计在因果分析中的作用:区分变量的先后顺序、有效获得历时性的影响结果,但即时性的因果影响采用追踪设计可能是不合适的。

共同方法变异的影响及其统计控制途径的模型分析

DOI:10.3724/SP.J.1042.2012.00757

URL

[本文引用: 1]

Common Method Variance (CMV) refers to the overlap in variance between two variables because of the type of measurement instrument used rather than representing a true relationship between the underlying constructs. Researchers should give careful consideration to CMV although it may not surely bias the conclusions about the relationships between measures. CMV effect is often created by using the same method — especially a survey — to measure each variable. Procedural design and statistical control solutions are provided to minimize its likelihood in studies. A statistical control technique is a good solution if it can separate construct varience, method varience and error, and distinguish method bias at the item level from method bias at the construct level, and takes account of Method×Trait interactions. Thus, method-factor approaches are better than partial correlation approaches. It’s very important to understand the model of every method-factor approache for selecting statistical remedies correctly for different types of research settings. Etimating evaluate the effect of CMV within specific research domains and the effect of CMV on empirical findings within a theoretical domain should be concerned for further research.

方法影响结果? 方法变异的本质、影响及控制

DOI:10.3969/j.issn.1003-5184.2013.03.001

URL

[本文引用: 1]

方法变异是由研究方法导致的系统误差。方法变异既可能产生结构层面的影响,也可能产生题目层面的影响;既可能影响单个结构的测量,也可能影响用相同方法研究的多个结构之间的关系,还可能影响用相同方法研究的多个研究之间的关系,进而影响到相关领域的理论建构。在控制方法变异时,可采取过程控制法和统计控制法,最好先用过程控制法。

正负性表述的方法效应: 以核心自我评价量表的结构为例

DOI:10.3969/j.issn.1003-5184.2015.01.015

URL

[本文引用: 1]

为了平衡反应偏向,负性表述题目被引入量表,但这可能增加新的方法变异.本研究以核心自我评价量表(CSES)为例,检验正负性表述方法效应对其结构的影响.研究表明,CSES在结构效度和效标关联效度上均受到了表述方法的潜在影响.本研究支持了控制方法效应后的CSES单因子结构.本研究不仅为一直无法统一的CSES中文版提供了新的解释,也为自陈式心理测量工具在设置负性题目方面提供了新的思考.

互动公平对员工幸福感的影响: 心理授权的中介作用与权力距离的调节作用

DOI:10.3724/SP.J.1041.2016.00693

URL

[本文引用: 1]

近些年,由于积极心理学的兴起,员工幸福感的研究得到了广泛的关注。本论文从互动公平这一特定的组织公平概念出发,以公平理论为主体,并结合自我决定理论,从心理授权的视角既分析了互动公平影响员工幸福感的内在机制,又探讨了权力距离对整个影响机制的调节作用。通过对国内一家制造业企业的199名员工多时点匹配问卷的调查,结果表明:互动公平与员工幸福感之间呈现正相关关系;心理授权中介了互动公平对员工幸福感的影响作用;权力距离不仅负向调节了互动公平与心理授权之间的关系,而且还负向调节了互动公平—心理授权—员工幸福感这一中介机制。本研究的发现有利于充分了解互动公平影响员工幸福感的内在机制和边界条件,同时能为管理实践提供更好的指导,有效地提高员工幸福感。

共同方法偏差的统计检验与控制方法

DOI:10.3969/j.issn.1671-3710.2004.06.018

URL

[本文引用: 1]

The problem of common method biases has being given more and more attention in the field of psychology, but there is little research about it in China, and the effects of common method bias are not well controlled. Generally, there are two ways of controlling common method biases, procedural remedies and statistical remedies. In this paper, statistical remedies for common method biases are provided, such as factor analysis, partial correlation, latent method factor, structural equation model, and their advantages and disadvantages are analyzed separately. Finally, suggestions of how to choose these remedies are given.

Individual performance: From common source bias to institutionalized assessment

DOI:10.1093/jopart/muv010

URL

[本文引用: 1]

Performance is perhaps the most central concept in public administration research, and this article discusses theoretically and investigates empirically how we can obtain more consistent performance measures. Theoretically, we combine existing arguments in public administration with institutional theory and the sociology of professions. Empirically, we ask whether different measures of individual performance produce different results. The investigated performance measures vary with regard to risk of common data source bias, standardization of assessment criteria, and external verification of the assessment. Our investigated explanatory variables are intrinsic motivation, public service motivation, and job satisfaction. Combining survey and administrative data for 747 lower secondary school teachers (teaching 5,679 students in 85 schools), we analyze 4 different measures of the same performance dimension for the same teachers: the teachers’ self-reported contributions to students’ academic skills, the students’ marks for the year’s work given by the teacher, marks in oral exams with one external examiner and the teacher, and marks in written exams with at least one external examiner. The associations are systematically stronger when the performance measure comes from the same data source as the explanatory variables, but when separate data sources are used and the measurement scale is institutionalized, the level of external verification does not matter much. Based on institutional theory and the sociology of professions, we develop a theoretical argument that can explain this.

Real or imagined? A study exploring the existence of common method variance effects in road safety research.

Reassessing the effect of survey characteristics on common method bias in emotional and social intelligence competencies assessment

DOI:10.1080/10705511.2014.934767

URL

[本文引用: 1]

Since the idea of method variance was inspired by D. T. Campbell and Fiske in 1959, many papers have demonstrated an ongoing debate about both its nature and impact. Often, method variance entails an upward bias in correlations among observed variables—common method bias. This article reports a split-ballot multitrait–multimethod experimental design for estimating 2 opposite biases: the upward biasing method variance from the reaction to the length of the response scale and the position of the survey items in the questionnaire and the downward biasing effect of poor data quality. The data are derived from self-reported behavior related to emotional and social competencies. This article illustrates a methodology to estimate common method bias and its components: common method scale variance, common method occasion variance, and the attenuation effect due to measurement errors. The results show that common method variance has a much smaller impact than random and systematic measurement errors. The results also corroborate previous findings: the greater reliability of longer scales and the lower reliability of items placed toward the end of the survey.

What is method variance and how can we cope with it? A panel discussion

DOI:10.1177/1094428109360993

URL

[本文引用: 8]

A panel of experts describes the nature of, and remedies for, method variance. In an attempt to help the reader understand the nature of method variance, the authors describe their experiences with method variance both on the giving and the receiving ends of the editorial review process, as well as their interpretation of other reviewers鈥 comments. They then describe methods of data analysis and research design, which have been used for detecting and eliminating the effects of method variance. Most methods have some utility, but none prevent the researcher from making faulty inferences. The authors conclude with suggestions for resolving disputes about method variance.

Transformational leadership, interactional justice, and organizational citizenship behavior: The effects of racial and gender dissimilarity between supervisors and subordinates

From the editors: Common method variance in international business research

DOI:10.1057/jibs.2009.88

URL

[本文引用: 3]

"JIBS" receives many manuscripts that report findings from analyzing survey data based on same-respondent replies. This can be problematic since same-respondent studies can suffer from common method variance (CMV). Currently, authors who submit manuscripts to "JIBS" that appear to suffer from CMV are asked to perform validity checks and resubmit their manuscripts. This letter from the Editors is designed to outline the current state of best parctice for handling CMV in international business research.

What reviewers should expect from authors regarding common method bias in organizational research

Twilight of dawn or of evening? A century of research methods in the Journal of Applied Psychology

Addressing common method variance: Guidelines for survey research on information technology, operations, and supply chain management

DOI:10.1109/TEM.2011.2136437

URL

[本文引用: 1]

Common method variance (CMV) is the amount of spurious correlation between variables that is created by using the same method-often a survey-to measure each variable. CMV may lead to erroneous conclusions about relationships between variables by inflating or deflating findings. We analyzed recent survey research in IEEE Transactions on Engineering Management, Journal of Operations Management, and Production and Operations Management to assess if and how scholars address CMV. We found that two-thirds of the relevant articles published between 2001 and 2009 did not formally address CMV, and many that did address CMV relied on relatively weak remedies. These findings have troubling implications for efforts to build knowledge within information technology, operations and supply chain management research. In an effort to strengthen future research designs, we provide recommendations to help scholars to better address CMV. Given the potentially severe effects of CMV, authors should apply the recommended CMV remedies within their survey-based studies, and reviewers should hold authors accountable when they fail to do so.

Common methods bias: Does common methods variance really bias results?

To prosper, organizational psychology should…overcome methodological barriers to progress

DOI:10.1002/job.529

URL

[本文引用: 2]

Progress in organizational psychology (OP) research depends on the rigor and quality of the methods we use. This paper identifies ten methodological barriers to progress and offers suggestions for overcoming the barriers, in part or whole. The barriers address how we derive hypotheses from theories, the nature and scope of the questions we pursue in our studies, the ways we address causality, the manner in which we draw samples and measure constructs, and how we conduct statistical tests and draw inferences from our research. The paper concludes with recommendations for integrating research methods into our ongoing development goals as scholars and framing methods as tools that help us achieve shared objectives in our field. Copyright 2008 John Wiley & Sons, Ltd.

How (not) to solve the problem: An evaluation of scholarly responses to common source bias

DOI:10.1093/jopart/muu020

URL

[本文引用: 3]

Abstract Public administration scholars are beginning to pay more attention to the problem of common source bias, but little is known about the approaches that applied researchers are adopting as they attempt to confront the issue in their own research. In this essay, we consider the various responses taken by the authors of six articles in this journal. We draw attention to important nuances of the common measurement issue that have previously received little attention and run a set of empirical analyses in order to test the effectiveness of several proposed solutions to the common-source-bias problem. Our results indicate that none of the statistical remedies being used by public administration scholars appear to be reliable methods of countering the problem. Currently, it appears as though the only reliable solution is to find independent sources of data when perceptual survey measures are employed.

Common methods variance detection in business research

DOI:10.1016/j.jbusres.2015.12.008

URL

[本文引用: 4]

The issue of common method variance (CMV) has become almost legendary among today's business researchers. In this manuscript, a literature review shows many business researchers take steps to assess potential problems with CMV, or common method bias (CMB), but almost no one reports problematic findings. One widely-criticized procedure assessing CMV levels involves a one-factor test that examines how much common variance might exist in a single dimension. This paper presents a data simulation demonstrating that a relatively high level of CMV must be present to bias true relationships among substantive variables at typically reported reliability levels. The simulation data overall suggests that at levels of CMV typical of multiple item measures with typical reliabilities reporting typical effect sizes, CMV does not represent a grave threat to the validity of research findings.

We know the Yin-but where is the Yang? Toward a balanced approach on common source bias in public administration scholarship

DOI:10.5465/AMBPP.2017.10293abstract

[Cited in this article: 2]

Surveys have long been a dominant instrument for data collection in public administration. However, it has become widely accepted in the last decade that the usage of a self-reported instrument to measure both the independent and dependent variables results in common source bias (CSB). In turn, CSB is argued to inflate correlations between variables, resulting in biased findings. Subsequently, a narrow blinkered approach on the usage of surveys as single data source has emerged. In this article, we argue that this approach has resulted in an unbalanced perspective on CSB. We argue that claims on CSB are exaggerated, draw upon selective evidence, and project what should be tentative inferences as certainty over large domains of inquiry. We also discuss the perceptual nature of some variables and measurement validity concerns in using archival data. In conclusion, we present a flowchart that public administration scholars can use to analyze CSB concerns.

Assessing the impact of common method variance on higher order multidimensional constructs

DOI:10.1037/a0021504

PMID:21142343

[Cited in this article: 3]

Researchers are often concerned with common method variance (CMV) in cases where it is believed to bias relationships of predictors with criteria. However, CMV may also bias relationships within sets of predictors; this is cause for concern, given the rising popularity of higher order multidimensional constructs. The authors examined the extent to which CMV inflates interrelationships among indicators of higher order constructs and the relationships of those constructs with criteria. To do so, they examined core self-evaluation, a higher order construct comprising self-esteem, generalized self-efficacy, emotional stability, and locus of control. Across 2 studies, the authors systematically applied statistical (Study 1) and procedural (Study 2) CMV remedies to core self-evaluation data collected from multiple samples. Results revealed that the nature of the higher order construct and its relationship with job satisfaction were altered when the CMV remedies were applied. Implications of these findings for higher order constructs are discussed.

The other side of method bias: The perils of distinct source research designs

DOI:10.1080/00273171003680278

PMID:26760287

[Cited in this article: 2]

Common source bias has been the focus of much attention. To minimize the problem, researchers have sometimes been advised to take measurements of predictors from one observer and measurements of outcomes from another observer or to use separate occasions of measurement. We propose that these efforts to eliminate biases due to common source variance create serious problems. To demonstrate the problems of using what we term the “distinct sources” measurement design, we provide an integrative review of the literature regarding both contamination and deficiency of measures. Building on this theme, the article uses simulated data to demonstrate how using data from distinct observers or occasions of measurement can distort estimates of predictor importance at least as much as common source variance. Alternative multisource designs are advocated and examined for tractability by simulating various numbers of observations and sources in the research design.

Common method variance and specification errors: A practical approach to detection

DOI:10.1080/00223980009598225

PMID:10908073

[Cited in this article: 1]

The purpose of this study was to demonstrate how examining the bivariate correlations between items in self-report measures can assist in differentiating between possible common method variance vs. model specification errors. Specifically, social desirability was viewed as either a possible source of common method variance or as a theoretically meaningful construct that should be included in the model of interest (i.e., a specification error). In the first instance, LISREL was used, and the level of correlation between measures of social desirability and measures of the five constructs of interest was manipulated. These results provided some insight as to when one needs to be concerned about the possible “common variance effects” on the structural model. In the second instance, the correlations between measures of social desirability and the measures of only two constructs of interest were again manipulated. These analyses illustrated the point at which the omission of social desirability as a theoretically relevant variable began to result in a poor fit of the structural model.

A Monte Carlo study of the effects of common method variance on significance testing and parameter bias in hierarchical linear modeling

DOI:10.1177/1094428112469667

[Cited in this article: 2]

Despite that common method variance (CMV) is widely regarded as a serious threat to the validity of findings based on self-reports, there is insufficient research on its confounding influence. We extend Evans's (1985) pioneering work, and the more recent works by Ostroff, Kinicki, and Clark (2002) and Siemsen, Roth, and Oliveira (2010), to delineate the influence of CMV in a two-level hierarchical linear model based on self-report data. Our simulation results clearly show that in the absence of true effects, it is extremely unlikely for CMV to generate significant cross-level interactions. In fact, if a true cross-level interaction exists, CMV tends to lower the likelihood of its identification and erroneously underestimate the regression coefficient. Our simulation results also show that CMV may lead to a false significant cross-level main effect and overestimate the regression coefficient when no true effect exists. To reduce the probability of Type I errors, we show that raising the significance level to .01, the split sample strategy, and the addition of more CMV contaminated variables are effective in the vast majority of real-life situations and are more effective than increasing the number of groups or persons in each group. Both the split sample strategy and the addition of more CMV contaminated variables are also effective in reducing parameter bias when no true cross-level main effect exists. Trade-offs associated with different strategies are discussed.

If it ain’t trait it must be method: (Mis)application of the multitrait-multimethod design in organizational research

The questionnaire survey is one of the most commonly used methods of data collection in public management research. These surveys often provide the information used to measure both the independent and dependent variables in an analysis. However, this introduces the risk of common method bias, a serious methodological challenge that has not received much attention as a distinct topic in public...

Method effects, measurement error, and substantive conclusions

Accounting for common method variance in cross-sectional research designs

Common method bias in marketing: Causes, mechanisms, and procedural remedies

DOI:10.1016/j.jretai.2012.08.001

[Cited in this article: 2]

There is a great deal of evidence that method bias influences item validities, item reliabilities, and the covariation between latent constructs. In this paper, we identify a series of factors that may cause method bias by undermining the capabilities of the respondent, making the task of responding accurately more difficult, decreasing the motivation to respond accurately, and making it easier for respondents to satisfice. In addition, we discuss the psychological mechanisms through which these factors produce their biasing effects and propose several procedural remedies that counterbalance or offset each of these specific effects. We hope that this discussion will help researchers anticipate when method bias is likely to be a problem and provide ideas about how to avoid it through the careful design of a study.

Common method variance in IS research: A comparison of alternative approaches and a reanalysis of past research

DOI:10.1287/mnsc.1060.0597

[Cited in this article: 1]

Despite recurring concerns about common method variance (CMV) in survey research, the information systems (IS) community remains largely uncertain of the extent of such potential biases. To address this uncertainty, this paper attempts to systematically examine the impact of CMV on the inferences drawn from survey research in the IS area. First, we describe the available approaches for assessing CMV and conduct an empirical study to compare them. From an actual survey involving 227 respondents, we find that although CMV is present in the research areas examined, such biases are not substantial. The results also suggest that few differences exist between the relatively new marker-variable technique and other well-established conventional tools in terms of their ability to detect CMV. Accordingly, the marker-variable technique was employed to infer the effect of CMV on correlations from previously published studies. Our findings, based on the reanalysis of 216 correlations, suggest that the inflated correlation caused by CMV may be expected to be on the order of 0.10 or less, and most of the originally significant correlations remain significant even after controlling for CMV. Finally, by extending the marker-variable technique, we examined the effect of CMV on structural relationships in past literature. Our reanalysis reveals that contrary to the concerns of some skeptics, CMV-adjusted structural relationships not only remain largely significant but also are not statistically differentiable from uncorrected estimates. In summary, this comprehensive and systematic analysis offers initial evidence that (1) the marker-variable technique can serve as a convenient, yet effective, tool for accounting for CMV, and (2) common method biases in the IS domain are not as serious as those found in other disciplines.

Common method variance in advertising research: When to be concerned and how to control for it

DOI:10.1080/00913367.2016.1252287

[Cited in this article: 2]

In this article we discuss and analyze the critical issues related to common method variance (CMV) that are particularly relevant to advertising research and recommend best practices for assessing the effects of CMV in this domain. Specifically, we cover the development of CMV as a domain-specific methodological concern and the underlying sources of CMV that are likely to operate in cross-sectional survey-based studies in the field of advertising. We discuss in detail the available procedural and statistical techniques that can be applied to control for and/or measure the effects of sources of CMV in a single study and across research domains. In addition, we provide a critical look at how these techniques have been employed in past research and make recommendations for future examinations of CMV in advertising research.

Assessing common methods bias in organizational research.

Subjective organizational performance and measurement error: Common source bias and spurious relationships

Common method bias in hospitality research: A critical review of literature and an empirical study

DOI:10.1016/j.ijhm.2016.04.010

[Cited in this article: 1]

Common method variance has received much attention in the behavioral sciences. Nonetheless, scant scholarly effort has been invested in handling common method variance in hospitality research. This study investigates the current status of controlling for common method variance in hospitality research and assists researchers in taking appropriate actions. Study 1 shows hospitality researchers' endeavors to control for common method bias through a critical review of literature published in four leading hospitality journals in the ten years from 2006 to 2015: International Journal of Hospitality Management, Journal of Hospitality & Tourism Research, Cornell Hospitality Quarterly, and International Journal of Contemporary Hospitality Management. In Study 2, empirical investigations examine the effectiveness of a procedural remedy (temporal separation) and a statistical control (an unmeasured method factor approach) with two independent samples. The results of Study 1 reveal that most survey-related publications in the four journals fail to address or acknowledge common method variance. Moreover, only a limited number of techniques is found to be used to control for method variance. The findings of Study 2 suggest that temporal separation with a time lag of one day leads to a weak control for method variance; however, the use of an unmeasured method factor significantly helps control for method variance in the model.

Method variance from the perspectives of reviewers: Poorly understood problem or overemphasized complaint?

Isolating trait and method variance in the measurement of callous and unemotional traits

DOI:10.1177/1073191115624546

PMID:26733309

[Cited in this article: 1]

To examine hypothesized influence of method variance from negatively keyed items in measurement of callous-unemotional (CU) traits, nine a priori confirmatory factor analysis model comparisons of the Inventory of Callous-Unemotional Traits were evaluated on multiple fit indices and theoretical coherence. Tested models included a unidimensional model, a three-factor model, a three-bifactor model, an item response theory-shortened model, two item-parceled models, and three correlated trait-correlated method minus one models (unidimensional, correlated three-factor, and bifactor). Data were self-reports of 234 adolescents (191 juvenile offenders, 43 high school students; 63% male; ages 11-17 years). Consistent with hypotheses, models accounting for method variance substantially improved fit to the data. Additionally, bifactor models with a general CU factor better fit the data compared with correlated factor models, suggesting a general CU factor is important to understanding the construct of CU traits. Future Inventory of Callous-Unemotional Traits analyses should account for method variance from item keying and response bias to isolate trait variance.

Surveying for “artifacts”: The susceptibility of the OCB-performance evaluation relationship to common rater, item, and measurement context effects

DOI:10.1037/a0032588

PMID:23565897

[Cited in this article: 4]

Despite the increased attention paid to biases attributable to common method variance (CMV) over the past 50 years, researchers have only recently begun to systematically examine the effect of specific sources of CMV in previously published empirical studies. Our study contributes to this research by examining the extent to which common rater, item, and measurement context characteristics bias the relationships between organizational citizenship behaviors and performance evaluations using a mixed-effects analytic technique. Results from 173 correlations reported in 81 empirical studies (N = 31,146) indicate that even after controlling for study-level factors, common rater and anchor point number similarity substantially biased the focal correlations. Indeed, these sources of CMV (a) led to estimates that were between 60% and 96% larger when comparing measures obtained from a common rater, versus different raters; (b) led to 39% larger estimates when a common source rated the scales using the same number, versus a different number, of anchor points; and (c) when taken together with other study-level predictors, accounted for over half of the between-study variance in the focal correlations. We discuss the implications for researchers and practitioners and provide recommendations for future research.

Common method biases in behavioral research: A critical review of the literature and recommended remedies

DOI:10.1037/0021-9010.88.5.879

PMID:14516251

[Cited in this article: 3]

Interest in the problem of method biases has a long history in the behavioral sciences. Despite this, a comprehensive summary of the potential sources of method biases and how to control for them does not exist. Therefore, the purpose of this article is to examine the extent to which method biases influence behavioral research results, identify potential sources of method biases, discuss the cognitive processes through which method biases influence responses to measures, evaluate the many different procedural and statistical techniques that can be used to control method biases, and provide recommendations for how to select appropriate procedural and statistical remedies for different types of research settings.

Sources of method bias in social science research and recommendations on how to control it

DOI:10.1146/annurev-psych-120710-100452

[Cited in this article: 5]

The threat of common method variance bias to theory building

DOI:10.1177/1534484310380331

[Cited in this article: 2]

The need for more theory building scholarship remains one of the pressing issues in the field of HRD. Researchers can employ quantitative, qualitative, and/or mixed methods to support vital theory-building efforts, understanding however that each approach has its limitations. The purpose of this article is to explore common method variance bias as one of the possible major threats to the validity of quantitative research findings upon which significant theory building relies. Common method variance has been shown to introduce systematic bias into a study by artificially inflating or deflating correlations, thereby threatening the validity of conclusions drawn about the links between constructs. Both procedural design and statistical control solutions are provided to minimize its likelihood in studies with monomethod designs. Finally, editors and reviewers are called upon to support knowledge-building about how best to handle common method variance bias in quantitative studies.

A tale of three perspectives: Examining post hoc statistical techniques for detection and correction of common method variance

Cross-sectional versus longitudinal survey research: Concepts, findings, and guidelines

Alternative techniques for assessing common method variance: An analysis of the theory of planned behavior research

DOI:10.1177/1094428114554398

[Cited in this article: 2]

Each research domain carries the burden of examining the effects of common method variance (CMV) on published research within the domain. To focus on this concern in the context of the theory of planned behavior (TPB), this research empirically compares several methods of detecting the presence of and estimating the level of CMV in the TPB domain. These methods include various implementations of the marker variable technique and versions of the multitrait-multimethod (MTMM) technique. The results show that the marker variable technique provides estimates of CMV and CMV-corrected correlations and paths that are consistent with those produced using the other methods. Next, one implementation of the marker variable technique method is implemented post hoc on a large data set of published TPB studies. This analysis provides strong confirmatory evidence that the effects of CMV do not alter the substantive inferences of study results in prior research. Overall, these findings support putting to rest concerns about the adverse influence of CMV in the TPB domain.

Examining the impact and detection of the “urban legend” of common method bias

Examining the “urban legend” of common method bias: Nine common errors and their impact

Estimating the effect of common method variance: The method-method pair technique with an illustration from TAM research

DOI:10.1080/15575330409490128

[Cited in this article: 1]

This paper presents a meta-analysis-based technique to estimate the effect of common method variance on the validity of individual theories. The technique explains between-study variance in observed correlations as a function of the susceptibility to common method variance of the methods employed in individual studies. The technique extends to mono-method studies the concept of method variability underpinning the classic multitrait-multimethod technique. The application of the technique is demonstrated by analyzing the effect of common method variance on the observed correlations between perceived usefulness and usage in the technology acceptance model literature. Implications of the technique and the findings for future research are discussed.

Common method bias in regression models with linear, quadratic, and interaction effects

Method variance in organizational research: Truth or urban legend?

Measurement artifacts in the assessment of counterproductive work behavior and organizational citizenship behavior: Do we know what we think we know?

DOI:10.1037/a0019477

PMID:20604597

[Cited in this article: 2]

An experiment investigated whether measurement features affected observed relationships between counterproductive work behavior (CWB) and organizational citizenship behavior (OCB) and their relationships with other variables. As expected, correlations between CWB and OCB were significantly higher with ratings of agreement rather than frequency of behavior, when OCB scale content overlapped with CWB than when it did not, and with supervisor rather than self-ratings. Relationships with job satisfaction and job stressors were inconsistent across conditions. We concluded that CWB and OCB are likely unrelated and not necessarily oppositely related to other variables. Researchers should avoid overlapping content in CWB and OCB scales and should use frequency formats to assess how often individuals engage in each form of behavior.

Common method variance or measurement bias? The problem and possible solutions

By P. E. Spector and M. T. Brannick, Published on 01/01/09

Common method issues: An introduction to the feature topic in Organizational Research Methods.

Testing and controlling for common method variance: A review of available methods

Assessing response styles across modes of data collection

DOI:10.1007/s11747-007-0077-6

[Cited in this article: 1]

Cross-mode surveys are on the rise. The current study compares levels of response styles across three modes of data collection: paper-and-pencil questionnaires, telephone interviews, and online questionnaires. The authors make the comparison in terms of acquiescence, disacquiescence, and extreme and midpoint response styles. To do this, they propose a new method, namely, the representative indicators response style means and covariance structure (RIRSMACS) method. This method contributes to the literature in important ways. First, it offers a simultaneous operationalization of multiple response styles. The model accounts for dependencies among response style indicators due to their reliance on common item sets. Second, it accounts for random error in the response style measures. As a consequence, random error in response style measures is not passed on to corrected measures. The method can detect and correct cross-mode response style differences in cases where measurement invariance testing and multitrait multimethod designs are inadequate. The authors demonstrate and discuss the practical and theoretical advantages of the RIRSMACS approach over traditional methods.

Method variance in organizational behavior and human resources research: Effects on correlations, path coefficients, and hypothesis testing

Method variance and marker variables: A review and comprehensive CFA marker technique

Four research designs and a comprehensive analysis strategy for investigating common method variance with self-report measures using latent variables

DOI:10.1007/s10869-015-9422-9

[Cited in this article: 1]

Common method variance (CMV) is an ongoing topic of debate and concern in the organizational literature. We present four latent variable confirmatory factor analysis model designs for assessing and controlling for CMV: those for unmeasured latent method constructs, Marker Variables, Measured Cause Variables, as well as a new hybrid design wherein these three types of method latent variables are used concurrently. We then describe a comprehensive analysis strategy that can be used with these four designs and provide a demonstration using the new design, the Hybrid Method Variables Model. In our discussion, we comment on different issues related to implementing these designs and analyses, provide supporting practical guidance, and, finally, advocate for the use of the Hybrid Method Variables Model. Through these means, we hope to promote a more comprehensive and consistent approach to the assessment of CMV in the organizational literature and more extensive use of hybrid models that include multiple types of latent method variables to assess CMV.

The influence of common method bias on the relationship of the socio-ecological model in predicting physical activity behavior

DOI:10.15171/hpp.2018.05

[Cited in this article: 1]

Background: The purpose of this study was to evaluate the extent, if any, that the association between socio-ecological parameters and physical activity may be influenced by common method bias (CMB). Methods: This study took place between February and May of 2017 at a Southeastern University in the United States. A randomized controlled experiment was employed among 119 young adults. Participants were randomized into either group 1 (the group we attempted to minimize CMB) or group 2 (control group). In group 1, CMB was minimized via various procedural remedies, such as separating the measurement of predictor and criterion variables by introducing a time lag (temporal; 2 visits several days apart), creating a cover story (psychological), and approximating measures to have data collected in different media (computer-based vs. paper and pencil) and different locations to control method variance when collecting self-report measures from the same source. Socio-ecological parameters (self-efficacy; friend support; family support) and physical activity were self-reported. Results: Exercise self-efficacy was significantly associated with physical activity. This association (β = 0.74, 95% CI: 0.33-1.1; P = 0.001) was only observed in group 2 (control), but not in group 1 (experimental group) (β = 0.03; 95% CI: -0.57-0.63; P = 0.91). The difference in these coefficients (i.e., β = 0.74 vs. β = 0.03) was statistically significant (P = 0.04). Conclusion: Future research in this field, when feasible, may wish to consider employing procedural and statistical remedies to minimize CMB.